System design principles are the backbone of building software systems that scale, perform, and evolve without chaos, helping teams make clear trade-offs across reliability, availability, efficiency, and maintainability from day one.

System design principles provide a shared language for structuring components, defining clean interfaces, and aligning architectural choices with real-world constraints like traffic growth, fault tolerance, and security threats.

In practice, applying system design principles means organizing systems into focused modules, reducing hidden dependencies, and designing for growth with strategies such as horizontal scaling, load balancing, caching, and asynchronous processing to keep latency low under load.

Just as importantly, resilience, failover, replication, and robust monitoring turn partial failures into graceful degradation rather than full outages, while privacy-by-design and end-to-end security protect data in motion and at rest. This article covers the purpose of system design and the core system design principles.

Key Takeaways

- Divide systems into focused modules like authentication and payments to enhance reusability and isolate changes.

- Use horizontal scaling and caches to handle growing loads efficiently, spotting bottlenecks early for optimal performance.

- Bundle data in classes with private access and abstract interfaces to hide complexities, boosting security and maintainability.

- Implement backups, mirroring, and fallbacks to minimize downtime, ensuring systems recover quickly from failures.

- Apply SOLID for extensible OOP and LoD to limit dependencies, reducing coupling and fostering adaptable, testable architecture.

What is the Purpose of System Design Principles?

When designing software systems, certain system design principles help engineers strike the right balance between functionality, technical requirements, and key qualities like scalability, reliability, and maintainability. These principles help ensure systems can handle real-world demands, adapt over time, and stay efficient without constant rework.

By applying solid system design principles, teams can make smarter trade-offs. For instance, they might focus on horizontal scaling to prepare for growth or introduce redundancy to boost fault tolerance. These decisions shape how different teams, like backend, DevOps, and product, can collaborate and weigh priorities as the system evolves.

At its core, system design is about building software that’s dependable, easy to expand, and straightforward to maintain. Techniques like load balancing, caching, and asynchronous processing are often baked in early to prevent future bottlenecks and failures.

Good design also emphasizes modularity and loose coupling, making code easier to test, deploy, and iterate on. This approach encourages reusability and teamwork while reducing issues like tight dependencies or unnecessary complexity.

Ultimately, following these principles helps keep downtime low and user experiences smooth. It also supports sustainable growth, allowing systems to scale efficiently without costly redesigns every time requirements change.

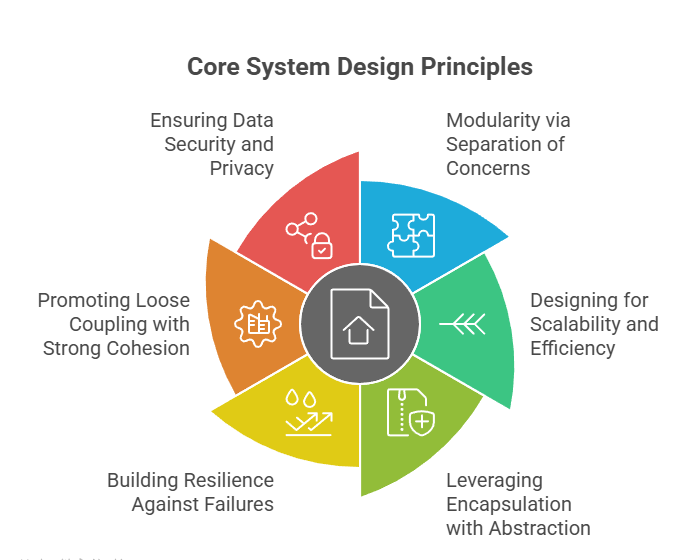

Core System Design Principles

Good software starts with core system design principles, which form the foundation for building anything complex. These principles slice large systems into sensible, bite-sized parts. Experience shows that following them results in software that’s simpler to use, quicker, and adaptable as requirements evolve.

Teams don’t simply follow rules; they build systems to withstand trouble like unexpected surges or outright breakdowns. Rather than fixing issues afterward, the idea is to plan for what can go wrong. This approach benefits everyone involved, both those using the system and those maintaining it.

If you build things right from the start, they will work better in the long term. Costly fixes become less frequent, and durability improves.

1. Modularity via Separation of Concerns

Separation of concerns promotes modularity by dividing software systems into smaller, self-contained modules, each responsible for a distinct aspect of functionality, thereby simplifying understanding, testing, and maintenance.

This approach enables developers to focus on individual components independently, minimizing interdependencies and facilitating the easier scaling or replacement of components without disrupting the entire system.

For instance, in modular programming, a payment module might handle transactions separately from a user authentication module, enhancing reusability and reducing the risk of cascading changes.

In an e-commerce application, separation of concerns through modularity divides the system into distinct modules like user authentication (handling login/validation) and payment processing (managing transactions/refunds), allowing each to evolve independently without affecting the other, which aligns with software design goals of maintainability and scalability.

This approach also enhances reusability and limits cascading changes. For instance, the authentication module can be reused in a mobile app, and updates to payment logic (e.g., integrating a new gateway) won’t require retesting user logins.

By localizing responsibilities, developers can focus on single concerns, reducing complexity and enabling parallel development in teams.

Scenario: Monolithic Approach (Without Separation)

Without modularity, a single function might blend authentication and payment, leading to tangled code where a security update in login breaks payment flows, increasing bug risks and maintenance effort. Here’s a simplified Python example of this violation, where everything is crammed into one handler:

```python
# Monolithic: mixed concerns (bad practice)
def process_order(email, password, amount, card_details):
    # Authentication concern mixed with payment
    if not email or not password or len(password) < 8:
        return "Authentication failed"
    # Payment concern mixed in
    if amount <= 0 or not card_details:
        return "Invalid payment details"
    # Simulate processing
    print(f"Authenticating {email}...")
    print(f"Charging ${amount} to {card_details}...")
    return "Order processed"


# Usage (the password must be at least 8 characters to pass the check)
result = process_order("user@example.com", "pass12345", 100, "1234-5678")
print(result)  # "Order processed"
```

Modular Approach (With Separation)

Applying separation of concerns, create independent modules: an AuthModule for credentials and a PaymentModule for transactions, communicating via simple interfaces like function returns or shared data structures.

This isolates changes: adding two-factor authentication won’t affect payment gateways. It also boosts reusability, as the payment module could serve multiple apps. Below is a refactored Python example using classes for clarity:

```python
# Modular: separate concerns (good practice)
class AuthModule:
    @staticmethod
    def authenticate(email, password):
        # Focused on authentication concern
        if not email or not password or len(password) < 8:
            return False, "Authentication failed"
        # Simulate auth logic
        print(f"Authenticating {email}...")
        return True, "Authenticated"


class PaymentModule:
    @staticmethod
    def process_payment(amount, card_details):
        # Focused on payment concern
        if amount <= 0 or not card_details:
            return False, "Invalid payment details"
        # Simulate payment logic
        print(f"Charging ${amount} to {card_details}...")
        return True, "Payment successful"


def process_order(email, password, amount, card_details):
    # Orchestrate modules without mixing concerns
    is_auth, auth_msg = AuthModule.authenticate(email, password)
    if not is_auth:
        return auth_msg
    is_paid, pay_msg = PaymentModule.process_payment(amount, card_details)
    if not is_paid:
        return pay_msg
    return "Order processed"


# Usage (the password must be at least 8 characters to pass the check)
result = process_order("user@example.com", "pass12345", 100, "1234-5678")
print(result)  # "Order processed"
```

In this modular design, each class handles one concern, facilitating unit tests (e.g., mock auth without payments) and easier extensions like adding refund logic solely to PaymentModule. Overall, this separation reduces system-wide regressions, aligning with principles like Single Responsibility for robust, evolvable software.

2. Designing for Scalability and Efficiency

Designing for scalability and efficiency means building systems that handle rising demand, using horizontal scaling (adding servers) and vertical scaling (adding capacity to existing machines) to keep responses fast under heavy load.

This principle stresses spotting potential bottlenecks early and applying tools such as load balancing, caching, and asynchronous (non-blocking) processing to spread work and tune metrics like latency and throughput.

For high-volume apps, storing popular data in caches can deliver near-instant replies as audiences expand.
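
Distributing requests across several servers can be sketched with a simple round-robin rotation. This is a minimal illustration rather than a production load balancer: the `RoundRobinBalancer` class and the server names are hypothetical, and real balancers also track health and load.

```python
from itertools import cycle


class RoundRobinBalancer:
    """Hands out backend servers in rotation so no single node takes all traffic."""

    def __init__(self, servers):
        if not servers:
            raise ValueError("at least one server is required")
        self._servers = list(servers)
        self._rotation = cycle(self._servers)

    def next_server(self):
        # Each call returns the next server in the rotation.
        return next(self._rotation)


# Hypothetical server names, used only for illustration.
balancer = RoundRobinBalancer(["app-1", "app-2", "app-3"])
assignments = [balancer.next_server() for _ in range(6)]
print(assignments)
```

Six requests land evenly on three servers, which is the property horizontal scaling relies on: adding a server to the list adds capacity without changing the callers.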

Example

In a high-traffic e-commerce site, designing for scalability involves implementing caching to store frequently accessed data like product details in memory (e.g., Redis), reducing database queries, and enabling horizontal scaling by adding server instances behind a load balancer to distribute requests evenly.

Without caching, each user search hits the database, causing slowdowns under load; with it, repeated reads are served from memory, so the site stays responsive as traffic surges without relying on vertical hardware upgrades alone. Auto-scaling groups that add nodes on demand let the system absorb large traffic increases (for example, a 10x spike) economically.

Let’s look at it with a simple code illustration. Consider a basic API endpoint fetching product info; without caching, it’s inefficient for repeated queries.

```python
# Without caching - database hit every time (inefficient for scale)
from flask import Flask

app = Flask(__name__)


def get_product_from_db(product_id):
    # Simulate slow DB query
    return f"Product {product_id}: Details from DB"


@app.route('/product/<int:product_id>')
def get_product(product_id):
    return get_product_from_db(product_id)
```

Applying efficiency: Use Redis for caching, with non-blocking async if needed, to fetch once and reuse, supporting load-balanced horizontal scaling.

Focusing on these aspects keeps resource use balanced without overspending, so capacity can grow with demand. Bottlenecks found early become planned capacity decisions rather than emergencies.

3. Leveraging Encapsulation with Abstraction

Encapsulation and abstraction reduce complexity by bundling data and behaviors into units, such as classes, while hiding internal details, ensuring controlled interactions through well-defined interfaces.

Encapsulation uses access modifiers such as private or protected to restrict direct access to object states, promoting data integrity and preventing unintended modifications in software engineering workflows.

Meanwhile, abstraction simplifies systems by exposing only essential features, allowing users or modules to interact without delving into implementation complexities, which streamlines development and enhances reusability.

Together, they minimize system complexity and boost maintainability, as changes to internal logic don’t affect external dependencies, making them indispensable for building layered architectures in modern software.

Example

In an inventory management app, encapsulation and abstraction hide database internals like SQL queries and connections behind a clean interface, protecting data integrity and allowing schema changes (e.g., switching databases) without impacting business logic.

This reduces complexity, prevents injection vulnerabilities via parameterized queries, and enhances reusability by exposing only essential methods like fetching inventory.

Without Encapsulation/Abstraction (Exposed Internals)

Business code directly manages queries, risking security and tight coupling.

```python
# Bad: raw access (vulnerable, coupled)
import sqlite3


def get_inventory(user_id):
    conn = sqlite3.connect('inventory.db')
    cursor = conn.cursor()
    cursor.execute(f"SELECT * FROM inventory WHERE user_id = {user_id}")  # Injection risk
    return cursor.fetchall()  # Raw data, no hiding
```

With Encapsulation and Abstraction (Hidden Details)

Encapsulate via private attributes/methods; abstract with an interface for controlled, secure access.

```python
# Good: abstract interface and encapsulated class
import sqlite3
from abc import ABC, abstractmethod
from typing import Dict, List


class DatabaseInterface(ABC):
    @abstractmethod
    def get_inventory(self, user_id: int) -> List[Dict]:
        pass


class SQLiteDatabase(DatabaseInterface):
    def __init__(self, db_path: str):
        self.__db_path = db_path  # Private: hidden config

    def __connect(self):  # Private: encapsulated logic
        return sqlite3.connect(self.__db_path)

    def get_inventory(self, user_id: int) -> List[Dict]:
        conn = self.__connect()
        try:
            cursor = conn.cursor()
            # Secure: user_id is bound as a parameter, never interpolated
            cursor.execute("SELECT * FROM inventory WHERE user_id = ?", (user_id,))
            rows = cursor.fetchall()
        finally:
            conn.close()  # Always release the connection
        # Abstracted output: callers get dicts, not raw tuples
        return [{"id": row[0], "item": row[1], "quantity": row[2]} for row in rows]


# Usage: simple, no DB exposure (assumes inventory.db has an inventory table)
db = SQLiteDatabase('inventory.db')
inventory = db.get_inventory(123)
```

This design isolates internals, enabling easy swaps (e.g., to MongoDB) and secure, maintainable code in layered systems.

4. Building Resilience Against Failures

Building resilience against failures improves uptime and stability by planning for breakdowns through backups, replication, and graceful fallbacks. This entails setting up error detection, recovery procedures, and monitoring to cut outage times, letting systems recover from faults without crashing entirely.

In distributed systems, failover processes and data mirroring across multiple nodes eliminate single points of failure, keeping operations running through intermittent faults.

Applying this principle strengthens overall system durability, limiting the impact of errors and keeping critical services available. With thorough health checks and continuous monitoring, it turns unpredictable risks into managed ones, building confidence in software stability.

Example

In a distributed e-commerce microservice for fetching product stock, resilience against failures like database outages is achieved through redundancy (mirrored databases), automatic failover (switching to a backup), and graceful degradation (returning cached data during issues), minimizing downtime to seconds via monitoring tools like health checks.

This setup spots errors early with logging, recovers via retries, and supports high-availability targets (for example, 99.9% uptime) by isolating failures, preventing cascades in high-stakes operations like order fulfillment.

Without Resilience (Direct Calls, Prone to Total Failure)

A naive implementation queries the primary database directly; if it fails (e.g., network glitch), the service crashes, halting operations and affecting users.

```python
# Bad: no resilience - single point of failure
import sqlite3  # Simulate DB


def get_stock(product_id):
    conn = sqlite3.connect('primary_db.db')  # No backup
    cursor = conn.cursor()
    cursor.execute("SELECT stock FROM products WHERE id=?", (product_id,))
    result = cursor.fetchone()
    conn.close()
    if result is None:
        raise Exception("DB failure - Service down")  # Cascades failure
    return result[0]


# Usage: fails entirely on error
try:
    stock = get_stock(123)
except Exception as e:
    print(f"Error: {e}")  # No recovery
```

This lacks oversight, leading to outages and lost revenue.

With Resilience (Retry, Fallback, and Monitoring)

Apply failover by trying a primary DB, then a mirrored backup; add retries for transient errors and a cache fallback for graceful degradation, monitored via simple logging for error detection. This ensures the service stays operational, returning partial data if needed.

```python
# Good: resilient with retry, failover, fallback, and basic monitoring
import logging
import sqlite3
import time

logging.basicConfig(level=logging.INFO)  # For oversight
cache = {}  # Last known good values, used only when every database fails


def query_stock(db_path, product_id):
    """Read stock from one database and always close the connection."""
    conn = sqlite3.connect(db_path)
    try:
        row = conn.execute(
            "SELECT stock FROM products WHERE id=?", (product_id,)
        ).fetchone()
    finally:
        conn.close()
    return row[0] if row else None


def get_stock_with_resilience(product_id, max_retries=3):
    databases = (("primary", "primary_db.db"), ("backup", "backup_db.db"))
    for attempt in range(1, max_retries + 1):
        # Try the primary first, then fail over to the mirrored backup
        for label, db_path in databases:
            try:
                stock = query_stock(db_path, product_id)
            except sqlite3.Error as e:
                logging.warning(f"{label} DB failed (attempt {attempt}): {e}")
                continue
            if stock is not None:
                cache[product_id] = stock  # Remember the last good value
                logging.info(f"Success from {label} DB")
                return stock
        if attempt < max_retries:
            time.sleep(1)  # Fixed pause before the next attempt

    # Graceful degradation: last known value if available, else a placeholder
    logging.error("All DBs failed - using fallback")
    return cache.get(product_id, "Out of stock - Check later")


# Usage: continues despite failures
stock = get_stock_with_resilience(123)
print(stock)  # Returns data or fallback
```

This design cuts outage impact through bounded retries, data mirroring for redundancy, and logging for proactive oversight, keeping vital services like real-time inventory available.

5. Promoting Loose Coupling with Strong Cohesion

When pieces of software aren’t overly reliant on each other, yet still work toward specific goals, everything feels more stable. Loose coupling means each part can shift or grow without dragging the rest along for the ride. Strong cohesion, on the other hand, keeps every piece centered on one main purpose, so it stays clean and simple to understand.

If everything’s interwoven, even minor tweaks cause widespread issues. When components link well via defined connections, operations flow without a hitch. Strong cohesion helps by gathering all related tasks in one place, making the code easier to reuse, read, and test.

Building with flexibility yields robust systems, ones that can scale without complications. Consequently, you sidestep issues arising from inflexible structures, particularly as scale increases or deployment diversifies.

It’s the same reason small, independent microservices work so well. They talk to each other through defined channels instead of sharing hidden details. That separation keeps errors from spreading and lets teams work in parallel without stepping on each other’s toes.
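
The two ideas can be sketched together in a few lines. In this illustration, which assumes hypothetical `Notifier`, `EmailNotifier`, and `OrderService` names, the order logic depends only on a small contract, and each class keeps to one purpose.

```python
from typing import Protocol


class Notifier(Protocol):
    """The only thing OrderService knows about any notification channel."""

    def send(self, recipient: str, message: str) -> None: ...


class EmailNotifier:
    """Cohesive: everything here is about email delivery and nothing else."""

    def __init__(self):
        self.sent = []

    def send(self, recipient: str, message: str) -> None:
        # A real implementation would call a mail service here.
        self.sent.append((recipient, message))


class OrderService:
    """Cohesive: only order logic. Coupled to the Notifier contract, not a class."""

    def __init__(self, notifier: Notifier):
        self._notifier = notifier

    def place_order(self, customer: str, item: str) -> str:
        confirmation = f"Order confirmed: {item}"
        self._notifier.send(customer, confirmation)
        return confirmation


notifier = EmailNotifier()
service = OrderService(notifier)
print(service.place_order("user@example.com", "keyboard"))
```

Swapping email for SMS or a message queue means writing another class with a `send` method; `OrderService` does not change, which is the practical payoff of loose coupling.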

6. Ensuring Data Security and Privacy

Keeping data safe and private isn’t something to tack on later; it has to be part of the build from day one. It’s about weaving protection right into the system so information stays secure and unwanted access is blocked before it even gets close.

That means encrypting data both at rest (for example, with AES) and in transit (with TLS), and enforcing access checks through mechanisms like OAuth, JWT-based tokens, or two-factor authentication so that only the right people get in.
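
To make the access-check idea concrete, here is a deliberately simplified signed token built on Python's standard `hmac` module. It is a stand-in for the signing idea behind token schemes such as JWT, not a JWT implementation; the token layout, `SECRET_KEY`, and both function names are assumptions for illustration, and production systems should use a vetted library.

```python
import hashlib
import hmac
import time

SECRET_KEY = b"demo-secret-change-me"  # Assumption: loaded from a secret store in practice


def _sign(payload: str) -> str:
    return hmac.new(SECRET_KEY, payload.encode(), hashlib.sha256).hexdigest()


def issue_token(user_id, ttl_seconds=300, now=None):
    """Return 'user_id.expiry.signature', signed with HMAC-SHA256."""
    issued_at = time.time() if now is None else now
    payload = f"{user_id}.{int(issued_at + ttl_seconds)}"
    return f"{payload}.{_sign(payload)}"


def verify_token(token, now=None):
    """Return the user id if the token is authentic and unexpired, else None."""
    try:
        user_id, expires, signature = token.rsplit(".", 2)
    except ValueError:
        return None  # Malformed token
    expected = _sign(f"{user_id}.{expires}")
    # Constant-time comparison avoids leaking how many characters matched
    if not hmac.compare_digest(signature.encode(), expected.encode()):
        return None  # Tampered with, or signed with a different key
    current = time.time() if now is None else now
    if int(expires) < current:
        return None  # Expired
    return user_id


token = issue_token("alice")
print(verify_token(token))
print(verify_token(token.replace("alice", "mallory")))
```

The second call returns `None` because changing the user id invalidates the signature, which is what lets a server trust a token without storing it.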

Privacy goes a step further. It’s about collecting only what’s needed, hiding personal details when possible, and making sure APIs are tight enough to resist attacks. Keeping proper logs helps too, as they let you spot unusual behavior early and handle issues before they blow up.
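
Collecting only what is needed and hiding personal details can be shown in a short sketch. The field names, the `ALLOWED_FIELDS` set, and the masking rule below are assumptions chosen for illustration, not a compliance recipe.

```python
ALLOWED_FIELDS = {"email", "display_name"}  # Assumption: all this feature needs


def minimize(payload: dict) -> dict:
    """Keep only the fields the feature actually needs and drop the rest."""
    return {key: value for key, value in payload.items() if key in ALLOWED_FIELDS}


def mask_email(email: str) -> str:
    """Hide most of the local part so logs stay useful without exposing the address."""
    local, separator, domain = email.partition("@")
    if not separator or not local:
        return "***"
    return f"{local[0]}***@{domain}"


signup = {
    "email": "jane.doe@example.com",
    "display_name": "Jane",
    "date_of_birth": "1990-01-01",
    "phone": "555-0100",
}
stored = minimize(signup)
print(stored)
print(f"signup received for {mask_email(stored['email'])}")
```

Data that is never stored cannot leak, and masked log lines still let operators trace an event without revealing the full address.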

If you plan for all this from the start instead of treating it as cleanup later, you avoid a lot of trouble. It not only meets security standards but also builds real trust with users. Over time, these forward-looking habits become a shield against new kinds of threats, turning security into a natural part of every system instead of an afterthought.

5 System Design Principles for Software Engineering

These five principles offer practical tools for software engineers to craft clean, adaptable code, building on broader architectural foundations to ensure systems remain flexible and efficient over time.

They emphasize simplicity, responsibility division, and avoiding unnecessary complexity, helping teams avoid common pitfalls in development while aligning with goals like maintainability and scalability. In software engineering, applying these principles leads to codebases that are easier to extend, debug, and collaborate on, ultimately supporting long-term project success.

SOLID Principle

The SOLID principles (Single Responsibility, Open-Closed, Liskov Substitution, Interface Segregation, and Dependency Inversion) provide a structured approach to object-oriented design, promoting code that is modular and resilient to changes.

Single Responsibility Principle (SRP) dictates that a class should have only one job, such as separating user data handling from database operations, which simplifies maintenance by isolating changes.
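
A minimal sketch of that split, assuming hypothetical `User` and `UserRepository` classes and an in-memory dictionary in place of a database:

```python
from dataclasses import dataclass


@dataclass
class User:
    """Holds user data and the rules about that data, nothing else."""
    name: str
    email: str

    def is_valid(self) -> bool:
        return bool(self.name) and "@" in self.email


class UserRepository:
    """Handles persistence only; a dict stands in for a real database."""

    def __init__(self):
        self._rows = {}

    def save(self, user: User) -> None:
        self._rows[user.email] = user

    def find(self, email: str):
        return self._rows.get(email)


repo = UserRepository()
user = User("Jane", "jane@example.com")
if user.is_valid():
    repo.save(user)
print(repo.find("jane@example.com"))
```

A change to storage touches only `UserRepository`, and a change to validation touches only `User`.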

Open-Closed Principle (OCP) allows extensions without modifying existing code, often through interfaces for adding new features like report types without altering core logic.
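
The report-type case might look like the following sketch, where the class and function names are illustrative assumptions:

```python
from abc import ABC, abstractmethod


class Report(ABC):
    @abstractmethod
    def render(self, rows: list) -> str: ...


class CsvReport(Report):
    def render(self, rows: list) -> str:
        return "\n".join(",".join(str(cell) for cell in row) for row in rows)


class MarkdownReport(Report):
    # Added later as a new class; publish() and CsvReport stay untouched.
    def render(self, rows: list) -> str:
        return "\n".join(
            "| " + " | ".join(str(cell) for cell in row) + " |" for row in rows
        )


def publish(report: Report, rows: list) -> str:
    """Core logic: closed for modification, open to any new Report subclass."""
    return report.render(rows)


rows = [("widget", 3), ("gadget", 5)]
print(publish(CsvReport(), rows))
print(publish(MarkdownReport(), rows))
```

Supporting another format means adding a class, not editing `publish`.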

Liskov Substitution Principle (LSP) ensures subclasses can replace base classes without breaking functionality, as seen in refining hierarchies like distinguishing flying from non-flying birds to maintain behavioral consistency.
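
One way to sketch the bird hierarchy, with class names chosen for illustration, is to put `fly()` only on the types that can honor it:

```python
class Bird:
    def __init__(self, name):
        self.name = name

    def describe(self):
        return f"{self.name} is a bird"


class FlyingBird(Bird):
    def fly(self):
        return f"{self.name} takes off"


class Sparrow(FlyingBird):
    pass


class Penguin(Bird):
    # Not a FlyingBird, so no caller can ask a penguin to fly.
    def swim(self):
        return f"{self.name} dives in"


def launch_all(birds):
    """Expects FlyingBird instances; every such subtype honors fly()."""
    return [bird.fly() for bird in birds]


def describe_all(birds):
    """Works for every Bird subtype, flying or not."""
    return [bird.describe() for bird in birds]


print(launch_all([Sparrow("Sparrow")]))
print(describe_all([Sparrow("Sparrow"), Penguin("Penguin")]))
```

Because `Penguin` never inherits a `fly()` it would have to break, any code written against `Bird` or `FlyingBird` keeps working with every subclass.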

Interface Segregation Principle (ISP) favors small, targeted interfaces over broad ones, so clients depend only on the methods they use; in a payment system, for example, a client that only charges cards need not depend on refund operations.
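
In a payment context this could be sketched as two narrow interfaces instead of one broad one. The gateway classes and method names below are hypothetical:

```python
from typing import Protocol


class Chargeable(Protocol):
    def charge(self, amount: float) -> str: ...


class Refundable(Protocol):
    def refund(self, amount: float) -> str: ...


class CardGateway:
    """Supports both capabilities."""

    def charge(self, amount: float) -> str:
        return f"card charged {amount:.2f}"

    def refund(self, amount: float) -> str:
        return f"card refunded {amount:.2f}"


class GiftCardGateway:
    """Charge-only: not forced to stub out a refund() it cannot honor."""

    def charge(self, amount: float) -> str:
        return f"gift card charged {amount:.2f}"


def checkout(gateway: Chargeable, amount: float) -> str:
    return gateway.charge(amount)  # Needs only charge()


def issue_refund(gateway: Refundable, amount: float) -> str:
    return gateway.refund(amount)  # Needs only refund()


print(checkout(GiftCardGateway(), 20))
print(issue_refund(CardGateway(), 5))
```

`checkout` accepts any object that can charge, so a gateway without refunds fits without dummy methods.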

Dependency Inversion Principle (DIP) states that high-level modules should depend on abstractions rather than concrete implementations, enabling swaps like logging services without core modifications. Together, SOLID principles foster extensible systems that evolve gracefully in software engineering.
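
The logging swap can be sketched as follows, assuming hypothetical `Logger`, `ConsoleLogger`, `MemoryLogger`, and `CheckoutService` classes:

```python
from abc import ABC, abstractmethod


class Logger(ABC):
    @abstractmethod
    def log(self, message: str) -> None: ...


class ConsoleLogger(Logger):
    def log(self, message: str) -> None:
        print(f"[console] {message}")


class MemoryLogger(Logger):
    """Keeps messages in a list; handy in tests."""

    def __init__(self):
        self.lines = []

    def log(self, message: str) -> None:
        self.lines.append(message)


class CheckoutService:
    """High-level policy: depends on the Logger abstraction only."""

    def __init__(self, logger: Logger):
        self._logger = logger

    def checkout(self, order_id: int) -> str:
        self._logger.log(f"checkout started for order {order_id}")
        return f"order {order_id} complete"


print(CheckoutService(ConsoleLogger()).checkout(7))

memory_logger = MemoryLogger()
CheckoutService(memory_logger).checkout(8)
print(memory_logger.lines)
```

The service is constructed with whichever logger suits the environment, and its own code is identical in both cases.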

DRY Principle

Don’t Repeat Yourself (DRY) urges avoiding code duplication by centralizing logic in reusable functions or modules, ensuring consistency and easing maintenance across the codebase. Duplication breeds errors during updates, but DRY mitigates this through abstractions like shared validation routines called from multiple endpoints.

This principle streamlines development, cuts redundancy, and boosts efficiency in large projects by implementing functionality once and referencing it everywhere.

In practice, DRY supports scalable software engineering by shrinking the amount of code to maintain and lowering bug risk, though it requires balance to avoid over-abstraction. A common application is extracting shared algorithms into libraries, preventing scattered implementations.

Example

Consider a scenario without DRY: repeated email validation in separate functions for user registration and profile updates, leading to duplication and potential bugs if rules change.

```python
# Without DRY - duplicated code (bad practice)
def register_user(email):
    if not email or '@' not in email or len(email) < 5:
        return "Invalid email"
    # Registration logic...
    return "User registered"


def update_profile(email):
    if not email or '@' not in email or len(email) < 5:
        return "Invalid email"
    # Update logic...
    return "Profile updated"
```

Now, applying DRY: Create a reusable validate_email function to eliminate repetition, ensuring consistency and easier updates (e.g., adding domain checks later).

```python
# With DRY - reusable validation (good practice)
def validate_email(email):
    if not email or '@' not in email or len(email) < 5:
        return False
    return True


def register_user(email):
    if not validate_email(email):
        return "Invalid email"
    # Registration logic...
    return "User registered"


def update_profile(email):
    if not validate_email(email):
        return "Invalid email"
    # Update logic...
    return "Profile updated"
```

This DRY approach cuts redundancy, simplifies testing the validation once, and aligns with software engineering best practices for scalable systems. In larger applications, extend it to classes or libraries for even broader reuse.

While the DRY principle promotes cleaner and more maintainable code, over-applying it, by forcing reuse where logic may later diverge, can actually reduce flexibility and make future changes harder to manage.

Principle of Least Astonishment (PoLA)

The Principle of Least Astonishment (PoLA) guides designs toward intuitive behaviors, ensuring components like APIs or interfaces operate predictably without unexpected side effects for users or developers.

It minimizes surprises by aligning functionality with common expectations, such as consistent button actions in UIs or method returns in code, fostering trust and reducing learning curves. PoLA applies broadly to interfaces and user experiences, promoting readability and error prevention in collaborative environments.

Adopting PoLA enhances software usability and maintainability, as intuitive designs speed debugging and onboarding. For example, a sorting function that defaults to ascending order unless specified avoids astonishing results from ambiguous inputs.

Imagine a poorly designed function that modifies the input array in place during concatenation, surprising developers who assume immutability like standard string methods:

```javascript
// Bad: mutates the original array, astonishing users expecting a new result
function concatenateLogs(logs, prefix) {
  for (let i = 0; i < logs.length; i++) {
    logs[i] = prefix + logs[i]; // Modifies input directly!
  }
  return logs; // Returns the mutated array
}

const originalLogs = ["Error occurred", "User logged in"];
const result = concatenateLogs(originalLogs, "INFO: ");
console.log(result); // ["INFO: Error occurred", "INFO: User logged in"]
console.log(originalLogs); // Also mutated! Unexpected side effect
```

This violates PoLA because it changes the caller’s data silently, leading to bugs in larger systems where originalLogs is reused elsewhere.

Adhering to PoLA (Predictable Behavior)

To follow PoLA, create a function that returns a new array without touching the input, mirroring familiar methods like Array.map() or String.concat(), so developers get the expected non-destructive output:

```javascript
// Good: returns a new array, no mutation, in line with expectations
function concatenateLogs(logs, prefix) {
  return logs.map(log => prefix + log); // Immutable operation
}

const originalLogs = ["Error occurred", "User logged in"];
const result = concatenateLogs(originalLogs, "INFO: ");
console.log(result); // ["INFO: Error occurred", "INFO: User logged in"]
console.log(originalLogs); // Unchanged: ["Error occurred", "User logged in"]
```

This design surprises no one, as it behaves like standard JavaScript utilities, making the code intuitive and easier to integrate into applications like web dashboards. In UI contexts, PoLA extends to elements like buttons: a “Save” button should persist changes immediately without hidden delays, matching user mental models from apps like word processors. By prioritizing such expectations, PoLA enhances usability and reduces debugging time in software projects.

You Aren’t Gonna Need It (YAGNI)

You Aren’t Gonna Need It (YAGNI) advises implementing only essential features based on current requirements, steering clear of speculative additions that complicate code without immediate value.

This principle combats over-engineering by focusing efforts on proven needs, shortening development cycles, and minimizing unused code that could harbor bugs. In agile software engineering, YAGNI encourages iterative builds, adding complexity only when user feedback demands it.

YAGNI keeps projects lean and adaptable, reducing technical debt while allowing quick pivots to real priorities. It shines in startups, where predicting future needs is uncertain, prioritizing viable products over hypothetical expansions.
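
As a small illustration, suppose the only current requirement is a CSV export of orders. The function name and order fields below are assumptions; the point is that the speculative machinery stays unwritten until someone actually needs it.

```python
import csv
import io


def export_orders_csv(orders):
    """Current requirement: export orders as CSV. Nothing more."""
    buffer = io.StringIO()
    writer = csv.writer(buffer, lineterminator="\n")
    writer.writerow(["id", "total"])
    for order in orders:
        writer.writerow([order["id"], order["total"]])
    return buffer.getvalue()


# What YAGNI argues against building today (left as a comment on purpose):
#   an exporter registry, plugin loading, XML/PDF back ends, per-tenant
#   format settings; none of these has a current user asking for it.

orders = [{"id": 1, "total": 19.99}, {"id": 2, "total": 5.0}]
print(export_orders_csv(orders))
```

If a second format is requested later, that is the moment to introduce an abstraction, with a real use case to shape it.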

Law of Demeter (LoD)

The Law of Demeter (LoD), also known as the Principle of Least Knowledge, limits an object’s interactions to its immediate “friends”, such as itself, method parameters, created objects, or direct components, to reduce coupling and prevent deep knowledge of internal structures in object-oriented designs.

This guideline avoids long method chains like objectA.getB().getC().doSomething(), which create fragile dependencies where changes in one class ripple across the system, promoting modular code that’s easier to maintain and refactor. By enforcing “talk only to immediate friends,” LoD enhances encapsulation and flexibility, allowing internal implementations to evolve without breaking external code.

In software engineering, LoD is particularly valuable in large systems like microservices, where it minimizes hidden dependencies, simplifies testing (e.g., mocking only direct collaborators), and supports scalability by keeping interactions local. It complements principles like SOLID by focusing on communication boundaries, ensuring objects assume as little as possible about others’ internals.

Simple Code Example (Python)

Consider a wallet system where a BankAccount shouldn’t directly access a User’s address details; violating LoD creates tight coupling, while adhering to it encapsulates access.

```python
# Violating LoD: deep chain, fragile (bad practice)
class User:
    def __init__(self):
        self.address = Address("123 Main St")


class Address:
    def __init__(self, street):
        self.street = street


class BankAccount:
    def __init__(self, user):
        self.user = user

    def print_statement(self):
        # Chain: knows too much about User and Address internals
        return f"Statement for {self.user.address.street}"


# Usage: breaks if Address changes
account = BankAccount(User())
print(account.print_statement())  # "Statement for 123 Main St"
```

```python
# Following LoD: local interactions only (good practice)
class Address:
    def __init__(self, street):
        self.street = street


class User:
    def __init__(self):
        self.address = Address("123 Main St")

    def get_full_name_with_address(self):
        # Encapsulates internal access
        return f"User at {self.address.street}"


class BankAccount:
    def __init__(self, user):
        self.user = user

    def print_statement(self):
        # Only talks to immediate friend (user)
        return f"Statement for {self.user.get_full_name_with_address()}"


# Usage: resilient to internal changes
account = BankAccount(User())
print(account.print_statement())  # "Statement for User at 123 Main St"
```

This LoD-compliant design reduces fragility, making the system more adaptable and maintainable.

Conclusion

System design principles are like the quiet rules that keep good software from falling apart over time. They help developers build systems that can take a hit, grow naturally, and stay manageable even as everything around them changes. Ideas like modularity and encapsulation might sound old-school, but they’re what make software easier to fix and extend later on.

Then there are the handy rules of thumb, things like SOLID or YAGNI, that keep teams focused on what actually matters. They push you to make thoughtful choices instead of rushing into messy code that’s hard to maintain. It’s really about clarity, efficiency, and keeping security in mind without overcomplicating things.

FAQs: System Design Principles

1. What are SOLID principles in system design?

SOLID principles (Single Responsibility, Open-Closed, Liskov Substitution, Interface Segregation, and Dependency Inversion) guide object-oriented design for modular, extensible software. They ensure classes focus on one task, extend without modification, and reduce coupling for maintainable systems.

2. How does the DRY principle improve code efficiency?

DRY (Don’t Repeat Yourself) eliminates duplication by centralizing logic in functions or modules, reducing errors and easing updates across codebases. It promotes reusability, cuts maintenance time, and aligns with scalable engineering practices.

3. Why apply the Law of Demeter (LoD)?

LoD limits object interactions to immediate dependencies, minimizing coupling and fragile chains like deep method calls. It enhances encapsulation, simplifies refactoring, and boosts system modularity in large applications.

4. When should you use YAGNI in development?

YAGNI advises implementing only required features now, avoiding speculative code that adds complexity without value. It keeps projects lean, reduces technical debt, and supports agile iterations based on actual needs.