Prateek Singhal brings 9+ years of industry experience across Microsoft and Samsung Electronics, spanning data engineering, software development, and technical leadership, with hands-on exposure to building data-driven and machine learning–enabled systems in large-scale production environments.

M. Prasad Khuntia brings practitioner-level insight into Data Science and Machine Learning, having led curriculum design, capstone projects, and interview-aligned training across DS, ML, and GenAI programs.

The transition from data engineer to machine learning engineer is a shift in ownership, not just tools, from pipelines to production ML systems.

Strong data engineering skills provide an advantage, but ML fundamentals and system design decide interview outcomes.

Production ML, including evaluation, monitoring, and retraining, is where credible MLEs are differentiated.

Success comes from thinking end-to-end and building reliable, data-first ML systems, not isolated models or notebooks.

The journey from data engineer to machine learning engineer is one of the most natural yet misunderstood role switches in modern tech. If you have spent years building reliable data pipelines, designing scalable systems, and making data production ready, you are already closer to machine learning engineering than you might think.

Switching from data engineer to ML engineer also comes with an increased salary. ML engineers in the US earn a median total compensation of $262,000 at top tech companies, compared to $155,000 for data engineers, according to the latest data. Data engineers are better positioned to close that gap than almost any other technical role because most of the required skills are ones they already use in production.

The best part about transitioning from data engineer to machine learning engineer is that you can build on your core strengths and extend them into model development, deployment, and ownership of ML systems in production. Many professionals exploring the data engineer to ML engineer path realize that their experience with data quality, infrastructure, and performance gives them a strong edge where most ML workflows break down.

A common misconception during the transition from data engineer to machine learning engineer is assuming the role is mainly about training models. In reality, modern ML engineering is dominated by production concerns.

Data quality, feature pipelines, latency, reliability, monitoring, deployment, and feedback loops matter far more than model choice. As machine learning systems become increasingly production-centric, engineers who understand data movement, scale, and observability are uniquely positioned to succeed.

In this guide, we lay out a clear roadmap to transition from data engineer to machine learning engineer, including a role comparison, the key skill gaps to close, a phased transition plan, and practical guidance to help you approach the switch realistically and with clear expectations.

Table of Contents

- Role Comparison: Data Engineer vs Machine Learning Engineer

- Skill Gap Analysis: Transitioning from Data Engineer to Machine Learning Engineer

- Roadmap to Transition from Data Engineer to Machine Learning Engineer

- Projects Professionals Should Build for Machine Learning Engineer Roles

- Interview Preparation for Machine Learning Engineer

- Common Mistakes When Switching from Data Engineer to Machine Learning Engineer

- Conclusion

Role Comparison: Data Engineer vs Machine Learning Engineer

At a surface level, data engineers and Machine learning engineers often appear to work with similar tools and infrastructure. In practice, the difference between the roles is not tooling but ownership, accountability, and how success is measured.

Both roles require strong engineering judgment, but the center of gravity is different. Data engineers optimize for data correctness and availability. Machine learning engineers optimize for decision quality and system behavior under uncertainty.

Core Responsibilities of a Data Engineer

A data engineer is responsible for ensuring that data systems are reliable, scalable, and production ready across the organization. The role focuses on building and maintaining data infrastructure and pipelines that enable analytics, reporting, and machine learning to operate on trustworthy data.

Core responsibilities typically include:

- Designing and maintaining batch and streaming data pipelines

- Ingesting data from multiple sources and transforming it into usable formats

- Managing data modeling, schema evolution, and data quality checks

- Orchestrating workflows using DAG based systems and handling backfills

- Optimizing data systems for performance, cost, and scalability

- Monitoring pipelines for failures, delays, and data inconsistencies

Data engineering work is largely centered around deterministic data systems. Given the same inputs and transformations, the output is expected to be predictable and correct. Most ambiguity in the role comes from scale, data volume, and infrastructure complexity rather than from downstream decision making.

Core Responsibilities of Machine Learning Engineer

A machine learning engineer is responsible for deploying, operating, and maintaining machine learning models in production environments. The role focuses on turning trained models into reliable systems that generate consistent and high quality predictions at scale.

Core responsibilities typically include:

- Building feature pipelines tightly coupled with model training and inference

- Deploying models to production and managing inference workflows

- Ensuring models meet latency, reliability, and performance requirements

- Monitoring model performance, data drift, and system health

- Designing retraining, rollback, and versioning strategies for models and features

- Handling model failures, degradation, and edge cases in production

ML engineering work deals with probabilistic systems. Even with the same code, model behavior can change as data evolves over time. Much of the ambiguity in the role comes from managing uncertainty, degradation, and feedback loops rather than from infrastructure alone.

Key Differences Between Data Engineer vs Machine Learning Engineer

Here are some key differences between data engineer vs machine learning engineer.

| Dimension | Data Engineer | Machine Learning Engineer |

| Primary Ownership | Data correctness, freshness, and reliability | Model correctness, performance, and degradation |

| Core Output | Trusted datasets and data pipelines | Deployed and monitored ML systems |

| Focus Area | Data movement, transformation, and availability | Model deployment, inference, and lifecycle management |

| Ambiguity Level | Lower, requirements are usually well defined | Higher, behavior changes with data and usage |

| Failure Mode | Broken or late data pipelines | Silent model decay, drift, or incorrect predictions |

| Evaluation Criteria | Pipeline stability, SLAs, data quality, cost efficiency | Model performance, latency, robustness, business impact |

| Relationship to Infrastructure | Owns and builds data infrastructure | Uses infrastructure but is accountable for model outcomes |

| Time Horizon | Ensures data works today and tomorrow | Ensures models remain valid over time |

Expert Insight

Data engineering and machine learning engineering are both fundamentally about building reliable, observable, and scalable decisioning systems. Data engineers operationalize data, while machine learning engineers operationalize intelligence built on top of that data.

Both roles share the same engineering mindset. Principles like idempotency, scalability, fault tolerance, observability, and cost efficiency are core to doing the job well. The tradeoffs are also similar. In production systems, both roles constantly balance speed, correctness, cost, and complexity.

Most importantly, both roles are data first. In data engineering and ML Engineering alike, system quality is ultimately determined by data quality. Bad data breaks dashboards just as reliably as it breaks models.

Advantages of Transitioning from Data Engineer to Machine Learning Engineer

A Data engineering background provides a strong (but incomplete) foundation for machine learning engineering. Data engineers have a clear advantage:

- A system level way of thinking that goes beyond notebooks and experiments

- Strong understanding of data reliability, quality, and correctness

- Comfort balancing tradeoffs between scale, latency, cost, and complexity

- Experience designing for failure, backfills, and observability in production systems

Despite these advantages, there are some challenges, such as:

- Shifting from deterministic data pipelines to probabilistic model behavior

- Reasoning about model performance, drift, and degradation over time

- Owning the full model lifecycle, not just data preparation

- Debugging failures where systems run successfully but produce poor predictions

In practice, this shows up clearly in interviews and on the job. Data engineers often excel at designing scalable data systems, but struggle when asked to explain why a model’s performance dropped, how to validate retraining, or when a model should be replaced versus rolled back.

The transition from data engineer to machine learning engineer works best for those who treat ML as a data first, system level responsibility rather than a simple extension of existing data tooling.

Question

Data Engineers bring a strong systems mindset to ML Engineering. They prioritize data reliability and quality, understand real-world tradeoffs between scale, latency, and cost, and design for failure with backfills and observability. These are some of the skills that translate directly to production ML systems.

Data Engineer vs ML Engineer Salary (2026)

The salary difference between the two roles is meaningful but not uniform. At the broad market level, Glassdoor puts the average ML engineer salary at $160,347 versus $132,376 for data engineers, a gap of roughly $28,000. At top tech companies the gap widens considerably. Levels.fyi, which captures total compensation including stock and bonus at companies like Google, Amazon, and Meta, shows a median of $262,000 for ML engineers against $155,000 for data engineers.

The numbers below reflect both sources so you have a realistic range depending on where you land.

| Role | Glassdoor average (2026) | Glassdoor range (25th to 75th) | Levels.fyi median total comp |

|---|---|---|---|

| Data Engineer | $132,376 | $103,700 to $170,729 | $155,000 |

| ML Engineer | $160,347 | $128,839 to $202,146 | $262,000 |

Sources: Glassdoor DE salary, Glassdoor MLE salary, Levels.fyi DE, Levels.fyi MLE. Data as of April 2026.

Related: Data Engineer vs Machine Learning Engineer Salary in 2026

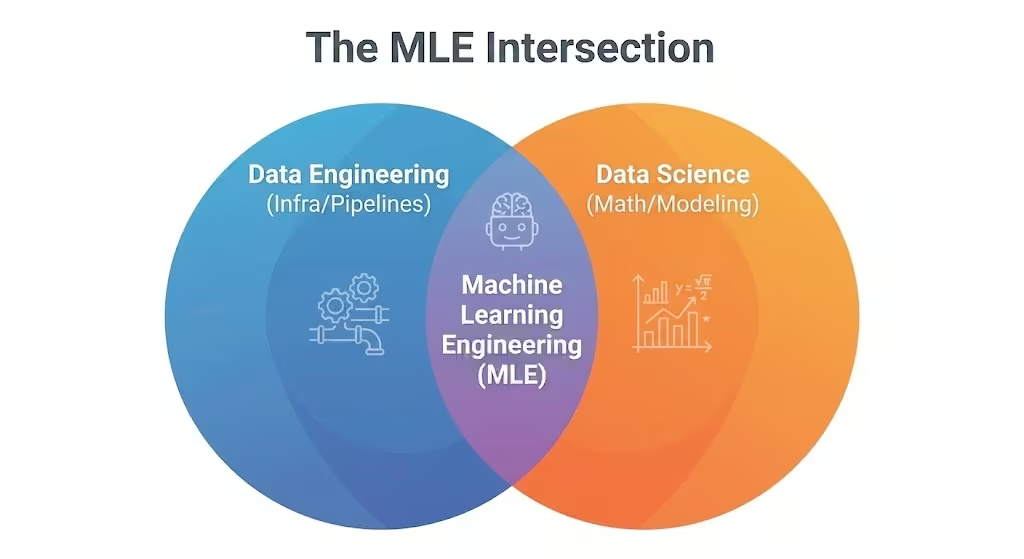

Skill Gap Analysis: Transitioning from Data Engineer to Machine Learning Engineer

One of the biggest mistakes people make when planning a data engineer to machine learning engineer transition is assuming they need to relearn everything from scratch. In reality, the skill gap is uneven. Some skills carry over directly, some are a tooling shift, and a small but critical set requires a genuine mindset change.

Let’s break down the skills in three clear buckets so you can focus your effort where it actually matters.

1. Skills That Carry Over From Data Engineer (Your Unfair Advantage)

These are skills that often take software engineers and data scientists years to internalize, but are already business as usual for experienced data engineers.

Data Pipelines & Orchestration

As a data engineer, you already design and operate complex pipelines using tools like Airflow, dbt, or Prefect. In ML Engineering, this work continues with feature pipelines, training workflows, and inference jobs. The mechanics are familiar: dependencies, retries, backfills, and failure handling. In practice, ML Engineering is dominated by data plumbing, and this is where data engineers are already experts.

Big Data Frameworks

Experience with Spark, partitioning strategies, shuffles, and memory management becomes a major advantage when models need to train on large datasets. Many ML candidates can build models on small samples, but struggle when training data scales to terabytes. Data Engineers already understand how distributed systems behave under load, which directly translates to scalable ML systems.

Cloud Infrastructure

Most data engineers are comfortable provisioning storage, managing IAM roles, and operating compute clusters on cloud platforms. Training jobs, batch inference, and feature generation use the same infrastructure primitives as data workloads. The workloads change, but the operational discipline does not.

Database Internals & Data Storage

Understanding how row oriented and column oriented systems work is critical when building feature stores and training datasets. Knowing how formats like Parquet behave and how query patterns affect performance gives data engineers an edge in designing efficient offline and online feature pipelines.

Expert Insight

2. Skills That Are Easier to Pick Up (The Tooling Shift)

These skills often look intimidating on job descriptions, but for data engineers they represent a shift in tools rather than a shift in thinking.

MLOps Platforms

Tools like MLflow, Kubeflow, or TFX are often described as complex, but conceptually they are DevOps for models. If you understand workflow automation, environment management, and deployment pipelines, these platforms are just new tooling wrapped around familiar ideas.

Feature Stores

Feature stores are essentially specialized data systems designed to serve pre computed features at low latency. Architecturally, this is not new. It mirrors patterns you may have already implemented using key value stores or low latency databases. The main learning curve is understanding how features are shared between training and serving, not the infrastructure itself.

3. Skills That Are Genuinely New (The Hard Part)

This is where the transition from data engineer to machine learning engineer requires a real mindset shift.

Statistical & Mathematical Intuition

Moving data reliably is not enough. ML Engineers must understand what the data represents and how it behaves. Concepts like distributions, variance, correlation versus causation, and statistical significance directly affect model quality and decisions.

Model Theory

You do not need deep theoretical mastery, but you must understand why different models behave differently. Knowing what a loss function represents, how gradient descent works, and why one model is more suitable than another is essential in interviews and real systems.

Experimentation & Probabilistic Debugging

In data engineering, jobs either succeed or fail. In ML Engineering, a model can run successfully and still be wrong. Learning to reason about accuracy, drift, skew, and silent degradation is often the most underestimated part of the transition.

Expert Insight

Data Engineer to ML Engineer Skill Transfer Matrix (Mapped to Tools)

| Skill area | DE background to MLE application | Gap to close |

|---|---|---|

| Pipeline orchestration Airflow, Prefect |

You build ETL DAGs with retries, dependencies, and scheduling. In MLE, the same patterns power training pipelines: data validation, feature generation, model training, evaluation, and registration as sequential jobs. | Learn ML-specific DAG patterns such as conditional model promotion and experiment branching. New tools: Metaflow, Kubeflow Pipelines. |

| Distributed processing Spark, Flink |

You process terabyte-scale datasets across partitioned clusters. In MLE, this becomes feature pipeline computation and large-scale model training where most candidates without a DE background hit a wall. | Minimal gap. Learn feature store semantics: point-in-time correctness and entity key joins. New tools: Feast, Tecton. |

| Streaming infrastructure Kafka, Kinesis |

You stream events from producers to consumers for real-time data delivery. In MLE, the same infrastructure powers streaming inference: consume live events, run model predictions, publish results downstream. | Learn inference latency budgets alongside stream consumers. New tools: Faust, Bytewax. |

| SQL and transformation SQL, dbt |

You model raw data into clean analytical tables. In MLE, the same skill applies to defining feature views, entity relationships, and materialization logic inside a feature store. | Learn how feature store queries differ from OLAP queries, particularly around time-travel joins and training vs serving consistency. |

| Table formats and versioning Delta Lake, Iceberg |

You manage schema evolution and time travel for data reliability. In MLE, the same capability underpins training dataset versioning: reproducing the exact dataset a model was trained on months after the fact. | Learn dataset versioning and experiment tracking concepts. New tools: DVC, LakeFS, MLflow Datasets. |

| Data quality validation Great Expectations, dbt tests |

You validate schemas, null rates, and distributions in pipelines. In MLE, the same discipline extends to detecting feature drift, training-serving skew, and prediction distribution shifts in production models. | Learn distribution-level validation beyond schema checks. New tools: Evidently, WhyLogs, Arize. |

| Cloud infrastructure Docker, K8s, Terraform |

You package and deploy data services in containerized cloud environments. In MLE, the same containerization skills apply directly to model serving endpoints and inference services. | Learn model serving frameworks and GPU resource scheduling. New tools: BentoML, Seldon, SageMaker Endpoints. |

| Pipeline monitoring Datadog, PagerDuty |

You monitor job failures, data freshness, and SLA breaches. In MLE, the same on-call discipline applies to model health: prediction drift, latency, throughput, and business metric degradation. | Learn to distinguish model degradation from infrastructure failure. New concept: shadow mode deployments and canary rollouts for models.S |

| ML fundamentals Genuinely new |

Not typically part of a DE role. ML engineers need to understand supervised and unsupervised learning, loss functions, regularization, and evaluation metrics well enough to reason about model behavior and debug production issues. | Target practical ML intuition, not research depth. |

| Experiment tracking Genuinely new |

DE pipelines are deterministic: same input, same output. MLE requires tracking hundreds of training runs with varying hyperparameters, metrics, and artifacts to understand what actually improved model performance. | Smaller surface area than it looks. New tools: MLflow, Weights and Biases. Roughly one to two weeks to become functional. |

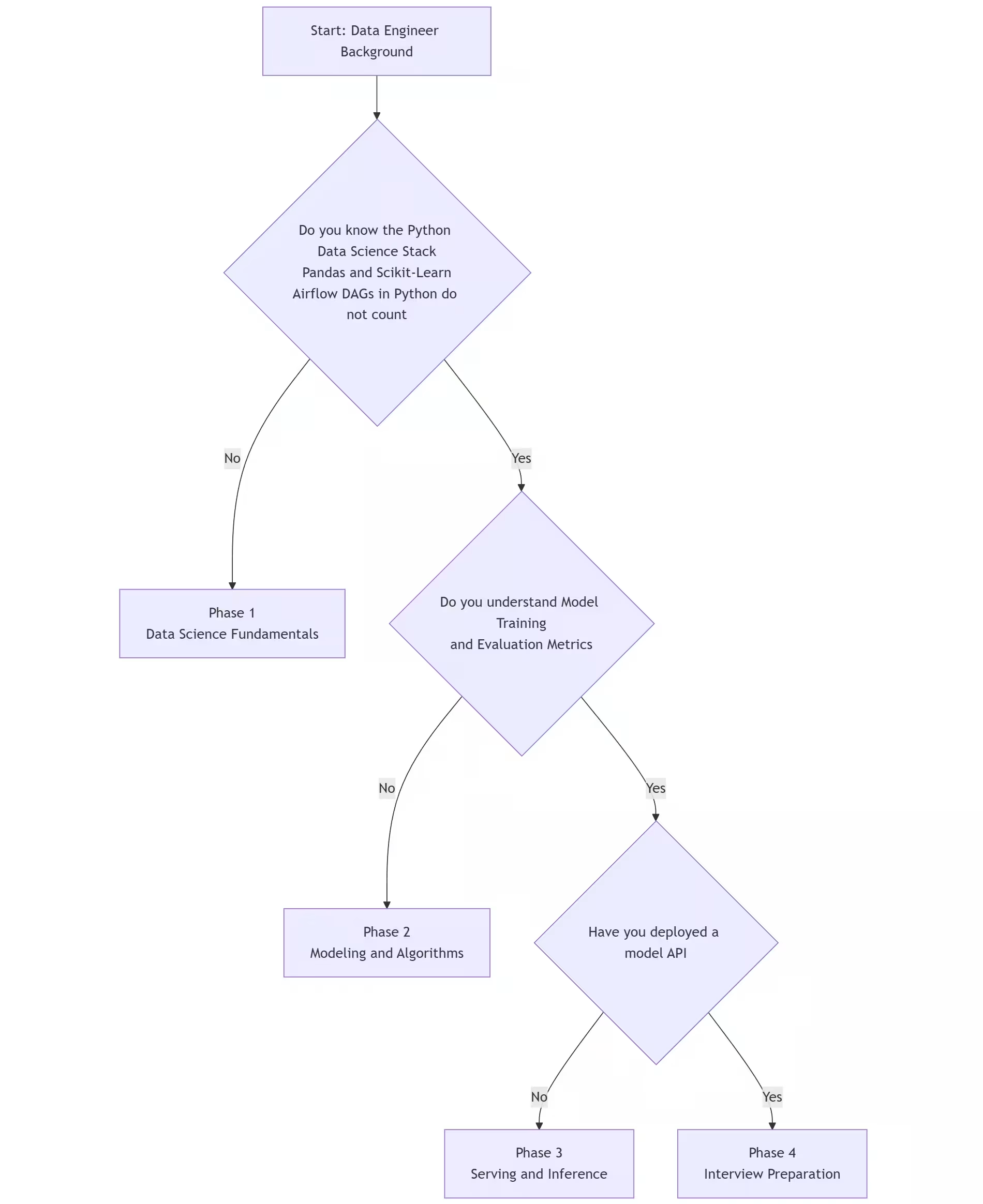

Roadmap to Transition from Data Engineer to Machine Learning Engineer

This roadmap is designed for data engineers who already understand infrastructure and scale. The goal is to build modeling intuition and ML system ownership, not to relearn engineering fundamentals you already mastered.

How to Prioritize What to Learn

Phase 1: Data Science Foundations (3-4 weeks)

This phase is about learning how data is consumed. Most data engineers are fluent in Python, but not in the Python data science stack. Writing Airflow DAGs or Spark jobs does not translate to comfort with Pandas, NumPy, or scikit-learn. This gap shows up immediately in interviews when candidates are asked to explore a dataset or reason about features.

Key skills to pick up include working fluently with Pandas dataframes, basic NumPy operations, and visualization libraries. You should be comfortable inspecting distributions, spotting outliers, handling missing values, and answering questions like “what does this dataset look like” before writing any pipeline.

The most important concept here is Exploratory Data Analysis. Learn to reason about data shape, skew, correlations, and leakage risks. Interviewers are looking for how you think about data, not how fast you write code.

Intentionally ignore distributed systems, Spark optimization, and scalability. Small datasets and notebooks are enough at this stage.

Related: Python for Data Engineers Moving into Machine Learning

TL;DR

Focus: You need to learn the “consumer” side of your pipelines. NumPy, Pandas, and Data Visualization (Seaborn).

Key Concept: Exploratory Data Analysis (EDA). Learn to look at data distributions, outliers, and correlations before writing a single pipeline.

What to Ignore: Complex distributed systems (you already know this). Focus purely on working with small data in a notebook.

Phase 2: Core Machine Learning & Modeling Intuition (4-5 weeks)

This phase is where many data engineers underprepare or overprepare in the wrong direction. You do not need advanced math or deep learning research. You do need a solid grasp of supervised learning fundamentals.

Question

Focus on regression, tree-based models, and basic neural network concepts. Understand when one class of model is preferable over another and what tradeoffs are involved.

Feature engineering is the most critical skill here. Learn how to transform raw inputs like timestamps, categorical variables, and text into meaningful numerical features. This is where many ML systems succeed or fail, and interviewers test this heavily.

You should also learn evaluation metrics deeply. Accuracy alone is not enough. Understand precision, recall, ROC-AUC, and how metric choice depends on business context.

The goal of this phase is to build a model end to end, tune it, and clearly explain why it performs the way it does. If you cannot explain a model’s behavior, you are not ready for ML Engineering interviews.

Intentionally ignore distributed systems, Spark optimization, and scalability. Small datasets and notebooks are enough at this stage.

Focus: Classical ML (Regression, Trees) and Deep Learning basics.

Key Concept: Feature Engineering. How to transform raw timestamps or text into numerical vectors that a model can understand.

Phase 3: Production ML, MLOps, and Feature Stores (2-3 weeks)

This is the most important and most underestimated phase of the transition. Here, you connect your new modeling skills with your existing engineering strengths. Focus on how models move from notebooks to production systems.

Key concepts include feature stores, model versioning, experiment tracking, and deployment patterns. Learn how training data differs from serving data and why training-serving skew breaks models in subtle ways. Understand model drift, data drift, and how monitoring is done in practice.

Build at least one small end-to-end ML system. It does not need to be large scale, but it must include feature generation, training, deployment, and monitoring.

Intentionally ignore distributed systems, Spark optimization, and scalability. Small datasets and notebooks are enough at this stage.

Focus: Connecting your new modeling skills with your old engineering skills. Build a Feature Store (using Feast) or a Distributed Training job.

Key Concept: Training-Serving Skew. Ensuring the data used for training matches the data available at inference time.

Phase 4: Interview Readiness and System Design (Ongoing)

Do not focus on learning about new tools at this stage. This phase is about synthesis. Focus heavily on ML system design interviews. As someone switching from data engineer to machine learning engineer, you will naturally do well in data ingestion, scalability, and reliability sections. Spend deliberate time practicing modeling choices, evaluation metrics, and retraining strategies.

Be prepared to discuss why a model degraded, how you would validate retraining, and when to replace versus roll back a model.

Do not dive deep into advanced theoretical ML or cutting-edge research papers. Hiring loops evaluate your ability to build and maintain production ML systems, not your ability to derive equations.

The strongest candidates position themselves as data-first, production-focused ML Engineers, not as model researchers.

Expert Insight

Projects Professionals Should Build for Machine Learning Engineer Roles

Projects matter more than certificates in ML Engineer interviews. Hiring managers look for evidence that you can own an ML system end-to-end, not just move data or train a model in isolation. The goal is to leverage your data engineering strength while clearly demonstrating modeling and ML lifecycle ownership.

What to Avoid: Pure Pipeline Projects

Some projects actively work against you during ML Engineer interviews.

- Standard ETL projects, such as moving data from S3 to Redshift, only prove data engineering competence, not ML Engineering readiness.

- Dashboarding or BI-style projects show analytics skills, not ML system design.

- Tutorial-driven notebooks that train a model once and stop do not demonstrate production thinking.

Interviewers often flag these as “pipe builder” portfolios.

Pitfalls to Watch For

Reference Project: Real-Time Feature Store and Prediction System

This is a strong, realistic reference project that aligns closely with real ML Engineer expectations.

Problem Statement

Build a real-time recommendation system that updates predictions based on user clickstream data within seconds.

Core Components

- Stream processing using Kafka or Spark Streaming to ingest live user click events. This highlights your scale and data engineering strength.

- A feature store built using Feast, with historical features stored in an offline store and real-time features served from Redis as an online store.

- Model training using offline feature data to build a recommendation model, demonstrating applied modeling competence.

- An inference service using FastAPI that queries the online feature store and returns predictions under a strict latency budget, for example, under 50 milliseconds.

- Monitoring focused on feature drift, detecting when live clickstream distributions diverge from training data.

- This project clearly demonstrates ownership across data, features, models, serving, and monitoring.

Alternative Project: Distributed Training Pipeline

- Focus: Optimizing the training process for massive datasets.

- Key Tech: Use Ray or PyTorch Distributed to parallelize the training of a large model across a cluster.

- Why: It solves a critical “pain point” for data scientists (long training times) using engineering skills.

Expert Insight

What These Projects Demonstrate

- You understand the Data-Centric AI paradigm.

- You can bridge the gap between Offline Training and Online Serving.

- You are not just a “pipe builder,” but a “system architect.”

Interview Preparation for Machine Learning Engineer

When transitioning from data engineer to machine learning engineer, the interview might feel a little harder. They test a different kind of reasoning. Many data engineers walk in confident because their systems skills are strong, and still fail because they underprepare the ML-specific parts that interviewers care deeply about.

This section breaks down how to prepare, what interviews actually look like, and where DE candidates most often fall short.

How to Prepare for Machine Learning Engineer Interviews

Preparation for ML Engineer interviews looks very different from preparing for data engineering roles, even though many candidates underestimate this shift. Based on real interview experiences across companies, most ML Engineer interviews still begin with heavy coding screens, often LeetCode medium to hard, even before ML is evaluated.

This means preparation must start with algorithmic coding under strict time pressure, typically solving one to two problems in 40-45 minutes without a runnable environment.

Where data engineers most often fail is assuming strong infrastructure experience will compensate for weak ML fundamentals. In practice, candidates are repeatedly evaluated on their ability to reason about model behavior, explain feature choices, and discuss evaluation metrics, even when the role is heavily production-oriented.

Many interviews explicitly test whether you can explain why a model’s performance dropped, how to validate retraining, or how to handle drift.

Question

- Distributed systems (Spark, Databricks, Delta Lake)

- Data modeling & quality

- Pipeline reliability & orchestration (DAGs)

- Cost optimization & performance tuning

- Cloud infrastructure (Azure, security, zero-trust)

- Debugging large-scale data issues

These are your strong pillars when you switch from data engineer to ML engineer.

A practical interview preparation timeline typically looks like this:

- First 2-3 weeks: Lock in coding fluency so problem-solving becomes mechanical. Practice LeetCode-style questions under time pressure and focus on writing correct, clean code while explaining your thinking clearly.

- Next 3-4 weeks: Strengthen ML fundamentals and model reasoning. Practice explaining ML concepts verbally rather than mathematically, including model behavior, feature choices, evaluation metrics, and common failure modes.

- Final phase: Focus heavily on ML system design. Practice explaining how machine learning pipelines differ from data pipelines in terms of long runtimes, parallelization, checkpointing, retraining, and failure handling.

Typical Interview Process and Structure for Machine Learning Engineer

Across companies, the machine learning engineer interview process follows a repeatable pattern, even though the number of rounds and emphasis may vary.

Most processes include:

- Recruiter screen: Background, role fit, motivation, and logistics

- Technical screen: Algorithmic coding and baseline ML readiness

- Interview loop (virtual or onsite): Multiple 45–60 minute rounds evaluating different dimensions of ML engineering capability

| What This Stage Evaluates | Stage | What Candidates Are Usually Tested On |

| Role fit, motivation, logistics | Recruiter Screen | Background walkthrough, prior ML or data experience, team alignment, availability |

| Baseline engineering and ML readiness | Technical Screen | LeetCode-style coding (medium to hard), Python fluency, basic ML concepts |

| End-to-end ML engineering capability | Interview Loop (Virtual or Onsite) | Coding, ML system design, ML fundamentals, behavioral and project deep dives |

Common Rounds in the Interview Loop Include

- Coding interviews focused on data structures and algorithms

- ML system design interviews covering end-to-end pipelines

- ML fundamentals or breadth rounds testing model behavior and evaluation

- Behavioral interviews centered on past projects and decision-making

- Project deep dives probing ownership and production experience

Common Interview Rounds and What They Evaluate

| Round Type | Primary Focus | What Interviewers Look For |

| Coding (DSA) | Problem solving under time pressure | Correctness, efficiency, edge case handling, communication |

| ML System Design | Designing production ML systems | Clear data → features → model → serving flow, scalability, failure handling |

| ML Fundamentals | Model understanding and reasoning | Bias-variance tradeoff, metrics, feature tradeoffs, drift |

| Production ML | Operating ML systems over time | Monitoring, retraining triggers, rollback vs replacement decisions |

| Project Deep Dive | Depth of ownership | Ability to explain design choices, tradeoffs, and failures |

| Behavioral | Collaboration and judgment | Conflict handling, prioritization, learning mindset |

Expert Insight

Interview Questions for Machine Learning Engineer

Before diving into the questions, it’s important to reset expectations about how ML Engineer interviews actually work. These interviews are not designed to test how many tools you remember or how well you can recite frameworks.

Instead, interviewers use a small set of recurring themes to evaluate depth of understanding, judgment, and ownership. Questions may appear in different rounds or be framed differently across companies, but they consistently map back to the same underlying domains.

The sections below organize real interview questions the way interviewers think about them, so you can focus on preparing the right kind of reasoning rather than memorizing answers.

1. ML Lifecycle & Model Management

This domain evaluates whether you understand how machine learning systems move from experimentation to production, and how they are operated, evaluated, and evolved over time. For data engineers transitioning into ML Engineer roles, this is often the first major gap.

Unlike data pipelines, models are not promoted based on job success or failure, but on performance, risk, and impact. Interviewers use these questions to test ownership and want to see whether you treat models as long-lived, versioned assets that must be compared, monitored, and rolled back safely, rather than as one-time training outputs.

- How do you decide when a model is ready to go to production?

- What information do you store alongside a trained model?

- How do you compare a new model against an existing production model?

- What happens if a newly trained model performs worse than the current one?

- How do you version models and ensure reproducibility?

What interviewers are listening for is not tool names, but whether you:

- understand evaluation-driven promotion

- treat models as lifecycle-managed assets

- can explain traceability, rollback, and reproducibility clearly

2. ML System Design & Pipelines

This domain focuses on designing end-to-end ML systems, not just deploying isolated components. These questions are intentionally open-ended and ambiguous because real ML systems rarely have clean boundaries or perfect requirements.

If you are switching from data engineer to machine learning engineer, this is where interviews diverge sharply from data pipeline design. ML pipelines often run for hours or days, are triggered by data rather than code changes, and must be designed to handle partial failures, retries, and checkpointing without restarting expensive computation.

- Design a pipeline that retrains a model weekly using new data.

- How would you automate retraining without manual approval?

- How do you prevent bad models from being deployed?

- How would you design a system that supports multiple models and versions?

- What changes when pipelines are triggered by data instead of code?

Interviewers are evaluating whether you can:

- reason end to end from data ingestion to serving

- design safeguards around automation and failure

- articulate tradeoffs in latency, cost, and reliability

- explain how ML pipelines differ fundamentally from data pipelines

Expert Insight

3. ML Fundamentals for Engineers

If you are switching from data engineer to machine learning engineer, this part can trip you up. Many candidates coming from data engineering backgrounds sound comfortable with ML terms, but struggle when asked to reason about model behavior under pressure.

Interviewers are not testing advanced math or research depth here. They are checking whether you understand why models behave the way they do and how that impacts real systems. These questions often appear casually during system design or project discussions, which is why they catch candidates off guard.

The goal is to see if you can explain ML concepts clearly, connect them to data and outcomes, and reason through tradeoffs without hiding behind formulas.

- Explain the bias-variance tradeoff in machine learning.

- What are precision and recall, and when would you optimize for one over the other?

- How do you deal with imbalanced datasets?

- How do you prevent overfitting in a model?

- How do you choose an evaluation metric for a given problem?

- What happens when training data is not representative of production data?

Interviewers are listening for whether you:

- can reason about model behavior, not just definitions

- understand how data characteristics affect outcomes

- can connect metrics to business impact

- stay grounded when answers are probabilistic, not binary

4. Project Deep Dive

This domain evaluates the depth of ownership rather than surface-level exposure. Interviewers use project deep dives to distinguish between candidates who contributed to a system and those who truly owned it. This is why building good projects for your portfolio is important.

Questions are usually open-ended and “follow-up-heavy”. Interviewers will keep drilling until they either hit genuine understanding or uncover gaps.

- Walk me through a recent technical project you have worked on.

- What is your proudest project and why?

- What tradeoffs did you make in this system and why?

- What broke in production, and how did you handle it?

- If you were to redesign this today, what would you change?

Interviewers are looking for clear ownership, decision-making rationale, and the ability to explain failures honestly.

5. Behavioral Interviews

Behavioral interviews are used to assess how you operate in real teams, especially under ambiguity and pressure. For ML Engineer roles, these questions often focus on collaboration, judgment, and learning, rather than generic culture fit.

Behavioral questions are frequently mixed into technical rounds rather than isolated.

- Tell me about a time you handled conflict at work.

- Describe a situation where a project did not go as planned.

- How do you prioritize when working on multiple competing tasks?

- Tell me about a time you disagreed with a technical decision.

- What are your greatest strengths and weaknesses?

Interviewers evaluate clarity of thought, accountability, and how you learn from mistakes.

Common Mistakes When Switching from Data Engineer to Machine Learning Engineer

The transition from data engineer to ML Engineer often fails for reasons that have very little to do with intelligence or effort. Most mistakes come from misplaced focus.

A common misstep is ignoring ML fundamentals and jumping straight into tools. Many candidates can wire together pipelines but struggle to explain bias–variance tradeoffs, evaluation metrics, or why one model behaves differently from another.

Closely related is a shallow understanding of model evaluation. Treating accuracy as a single success signal, without context, thresholds, or business impact, is a frequent red flag in interviews.

Another mistake is treating machine learning like a batch ETL job. Unlike data pipelines that finish in minutes, ML pipelines often run for hours or days. Candidates underestimate the importance of checkpointing, resumability, and long-running failure handling. Over-focusing on MLOps tools while under-focusing on core concepts leads to fragile systems that look impressive but break easily.

To avoid these pitfalls, start with fundamentals. Get comfortable reasoning about bias, variance, metrics, and failure modes. Think in systems, not notebooks. Build at least one production-style ML project that includes training, serving, monitoring, and retraining. Most importantly, learn how models fail in production. Leverage your data engineering strength to build reliable ML systems, not brittle demos.

Question

- Start with fundamentals like bias, variance, evaluation.

- Think in systems, not notebooks.

- Build at least one real production-style ML project.

- Learn how models fail in production.

- Leverage your DE strengths and learn to build reliable ML systems.

Conclusion

The transition from Data Engineer to Machine Learning Engineer is not a shortcut, nor a guaranteed upgrade. The core shift lies in moving from building deterministic data systems to owning learning systems that evolve, degrade, and require continuous judgment. Success in this transition depends less on tools and more on how well you understand model behavior, evaluation, and long-term system reliability.

This path is best suited for data engineers who enjoy system ownership, ambiguity, and production responsibility, and who are willing to move beyond pipelines into model lifecycle management. It is not ideal for those looking to avoid foundational ML learning, skip evaluation depth, or treat ML Engineering as a thin layer on top of existing data infrastructure.

Approached realistically, the transition from data engineer to machine learning engineer can unlock broader responsibility and stronger long-term career growth, but only when pursued with clear expectations and disciplined learning.

For many Data Engineers, the real challenge is expanding ownership from data movement to decision-making systems. Moving into ML Engineering means taking responsibility for how models are trained, evaluated, deployed, monitored, and retrained in production, not just how data flows through pipelines.

Interview Kickstart’s Advanced Machine Learning Program with Agentic AI is designed for engineers who already understand data and infrastructure and want to build credible, production-grade ML system ownership. The program emphasizes real ML pipelines, feature stores, model deployment, monitoring, and retraining, along with interview preparation aligned to how ML Engineers are actually evaluated.

If you’re looking for a guided, end-to-end path to move from Data Engineering into ML Engineering without guessing what to learn next, start with the free webinar to see how the program supports this transition.

FAQs: Transition from Data Engineer to ML Engineer

1. Can a data engineer become a machine learning engineer?

Yes. Data engineers already own the infrastructure ML systems run on. The genuine gaps are ML fundamentals and experiment tracking. Both have a much smaller learning surface than MLE job descriptions suggest.

2. How long does it take to transition from a data engineer to a machine learning engineer?

Four to six months of structured preparation is enough for most data engineers to become competitive for MLE roles. FAANG-level roles typically take six to nine months depending on your ML fundamentals baseline.

3. What skills does a data engineer need to become an ML engineer?

Pipeline orchestration, distributed processing, cloud infrastructure, and streaming systems transfer directly. The only genuinely new areas are ML fundamentals, feature engineering intuition, and experiment tracking with tools like MLflow or Weights and Biases.

4. How is a data engineer different from a machine learning engineer?

Data engineers build infrastructure that moves and stores data reliably. ML engineers build systems that train models, serve predictions, and monitor model health in production. The roles share more technical overlap than most job descriptions show.

5. Is transitioning from data engineer to ML engineer worth it financially?

ML engineers earn $160,347 on average versus $132,376 for data engineers on Glassdoor. At top tech companies, Levels.fyi puts the gap at $107,000 in median total compensation.

6. What does a machine learning engineer do that a data engineer does not?

ML engineers own model training pipelines, experiment tracking, inference serving, and model monitoring. They reason about model behavior, not just data movement. The system still needs a data engineer upstream to function.