- The transition from data scientist to machine learning engineer is a shift from model experimentation to full system ownership.

- Success depends on production thinking, lifecycle management, and engineering rigor, not just modeling skill.

- Interviews evaluate systems design, monitoring, and pragmatism as much as ML fundamentals.

- With deliberate preparation and realistic expectations, this move can unlock broader impact and long-term technical growth.

Many professionals consider moving from data scientist to machine learning engineer after a few years in the role. The motivation is usually practical, not trendy. They want to work closer to ML-powered products, build features that customers actually use, and operate at larger scale.

From experience, this shift is less about learning new models and more about changing focus. As a Data Scientist, your work often supports internal stakeholders. As a Machine Learning Engineer, you are building systems that serve external users and run continuously.

One non-obvious friction point is working inside an engineering squad. Shared codebases, release cycles, and cross-functional dependencies can feel very different from independent analytical work.

The transition from data scientist to ML Engineer is realistic. But it requires deliberate effort, especially around production systems and collaboration within engineering teams. In this guide, we lay out a clear roadmap to transition from data scientist to machine learning engineer, including a role comparison, the key skill gaps to close, a phased transition plan, and practical guidance to help you approach the switch realistically and with clear expectations.

- Role Comparison: Data Scientist vs Machine Learning Engineer

- Skill Gap Analysis: What You Must Learn to Move from Data Scientist to Machine Learning Engineer

- Detailed Roadmap to Transition from Data Scientist to Machine Learning Engineer

- Projects Professionals Should Build for Machine Learning Engineer

- Interview Preparation for Candidates Switching From Data Scientist to Machine Learning Engineer

- Interview Questions for Machine Learning Engineer

- Common Mistakes When Switching from Data Scientist to Machine Learning Engineer

- Conclusion

1. Role Comparison: Data Scientist vs Machine Learning Engineer

Understanding the difference between these roles is critical before committing to the transition from data scientist to machine learning engineer. On the surface, both roles build and work with machine learning models. In practice, however, the scope of ownership, engineering depth, and evaluation criteria are meaningfully different.

Core Data Scientist Responsibilities

Data Scientists typically operate upstream in the decision-making process. Their work is often exploratory, ambiguous, and tightly connected to business questions. The core responsibilities of a data scientist include:

- Frame unclear business or product problems into analytical questions

- Define success metrics and trade-offs before modeling begins

- Perform experimentation, predictive modeling, or causal analysis

- Quantify uncertainty, assumptions, and risks in recommendations

- Translate findings into insights for stakeholders

- Influence product, strategy, or operational decisions

- Evaluated on rigor, reasoning quality, defensibility, and measurable impact

The role emphasizes analytical depth, hypothesis-driven thinking, and strong communication. Ownership usually ends at recommendations rather than long-term system maintenance.

Core Machine Learning Engineer Responsibilities

Machine Learning Engineers operate closer to production systems and customer-facing applications. Their work focuses on making ML reliable, scalable, and maintainable. The core responsibilities of a machine learning engineer include:

- Design and implement ML systems that run in production

- Build training, validation, and inference pipelines

- Deploy models using APIs or batch workflows

- Optimize systems for latency, throughput, and cost

- Monitor performance, data drift, and system failures

- Collaborate within engineering squads on shared codebases

- Evaluated on system reliability, scalability, and business impact over time

The role emphasizes ownership, iteration, and long-term maintenance. Responsibility does not end at model accuracy. It extends to uptime, robustness, and user experience.

| Dimension | Data Scientist | Machine Learning Engineer |

|---|---|---|

| Primary Focus | Insights and modeling | Production systems and scale |

| Stakeholders | Internal teams | Often external users |

| Work Style | Exploratory and analytical | Structured and engineering-driven |

| Success Metric | Model accuracy and business insight | Reliability, latency, uptime, iteration |

| Scope of Ownership | From problem framing to recommendation | From MVP to scaled, monitored system |

| Team Environment | Semi-independent with business stakeholders | Embedded in cross-functional engineering squads |

Advantages of Transitioning from Data Scientist to ML Engineer

Professionals moving from data scientist to machine learning engineer bring structural advantages that are highly relevant in real ML Engineering interviews and on-the-job performance. While engineering rigor must be developed deliberately, the analytical and modeling foundation is often already strong and differentiating.

Strong statistical intuition and experimentation mindset

Data Scientists are trained to think in terms of distributions, bias-variance trade-offs, and uncertainty. They understand how to evaluate models beyond surface-level metrics. This becomes a real asset in ML Engineering, especially when debugging model performance in production or deciding whether performance drops are due to data drift, feature issues, or modeling assumptions.

In interviews, this often shows up as deeper reasoning, and strong candidates do not just say what model they would use. They also explain why, under what assumptions, and what trade-offs they are making.

Problem framing and hypothesis-driven thinking

Many engineers can implement models. Fewer can clearly frame the problem before building anything. Data Scientists are used to defining success metrics, clarifying constraints, and aligning on trade-offs before execution. In ML Engineering roles, this translates into better system design decisions. Instead of overengineering early, they think in terms of MVPs, measurable impact, and iterative improvement. This systems-level thinking is one of the clearest advantages when moving from data scientist to ML engineer.

Communication and cross-functional alignment

Data Scientists often work closely with product managers, business teams, and leadership. They are comfortable explaining complex concepts in simple language and defending technical decisions with clarity.

2. Skill Gap Analysis: What You Must Learn to Move from Data Scientist to Machine Learning Engineer

If you are serious about moving from data scientist to machine learning engineer, this is the section that matters most. The gap is not about learning five new ML algorithms. It is about developing engineering depth and production thinking. To make this clear and less overwhelming, we can group the skills into three buckets.

Bucket 1: Skills That Carry Over (Your Foundation)

These are strengths you likely already have.

Statistical intuition

You understand distributions, bias-variance trade-offs, hypothesis testing, and uncertainty. This becomes extremely valuable when debugging model performance in production. Many engineers can write pipelines. Fewer can diagnose why a model’s performance degraded.

Model training and experimentation

You already know how to select features, compare algorithms, and interpret metrics such as AUC, F1, or RMSE. That core modeling skill remains relevant.

Data wrangling and SQL

You are comfortable working with messy data using Pandas or SQL. The shift is not learning data manipulation from scratch. The shift is learning how to automate and productionize it.

Often it’s not the ML model development itself that poses the challenge, but rather deployment. When it comes to deployment it’s understanding the release process, working within a product roadmap, being ready for things that might go wrong.

Bucket 2: Skills That Are Easier to Pick Up (Tooling Expansion)

These skills require effort, but they are incremental rather than transformational.

Advanced SQL and data engineering basics

You may already write SQL for analysis. The next step is writing performant SQL for pipelines, handling larger datasets, and thinking about data modeling rather than one-off queries.

Cloud fundamentals

If you have used tools like S3 or BigQuery, you already have exposure. Learning how to spin up compute instances, manage storage, or use managed ML services is a natural extension.

Version control and collaboration

Using Git properly, working with branches, resolving merge conflicts, and reviewing pull requests are practical skills that become daily requirements. These skills expand your toolkit, but they are not the core differentiator.

Bucket 3: Production & Systems Skills (Primary Differentiator)

This is the real gap. The single capability that most clearly separates strong candidates is systems thinking. Solving a problem once is not enough. You must think about how it evolves, scales, fails, and improves over time.

Model serving patterns

You need to understand the difference between batch inference and real-time inference. When should predictions run overnight versus in under 100 milliseconds? How do you wrap a model inside an API? How do you handle concurrent requests?

Latency, throughput, and scaling

In production, performance matters. You must consider how many users the system supports, how quickly predictions must return, and how infrastructure scales under load.

CI/CD and containerization

Tools like Docker isolate dependencies and make environments reproducible. CI/CD pipelines ensure that changes are tested and deployed safely. “It works on my machine” is not acceptable in ML Engineering.

Monitoring, drift, and retraining

What happens when input data changes? How do you detect data drift? When should the model be retrained? How do you prevent silent degradation?

Failure handling

What happens if the feature store goes down? If the model server crashes? If bad data enters the pipeline? Strong ML Engineers design systems that fail gracefully and recover predictably.

3. Detailed Roadmap to Transition from Data Scientist to Machine Learning Engineer

The objective of this roadmap is to build production engineering depth on top of your existing modeling foundation without drifting into unnecessary research topics or unrelated software engineering domains.

As a Data Scientist, you already understand experimentation, metrics, and predictive modeling. What you need to build now is software engineering rigor, deployment capability, and systems thinking. This roadmap moves you from model development comfort to production ownership and scale.

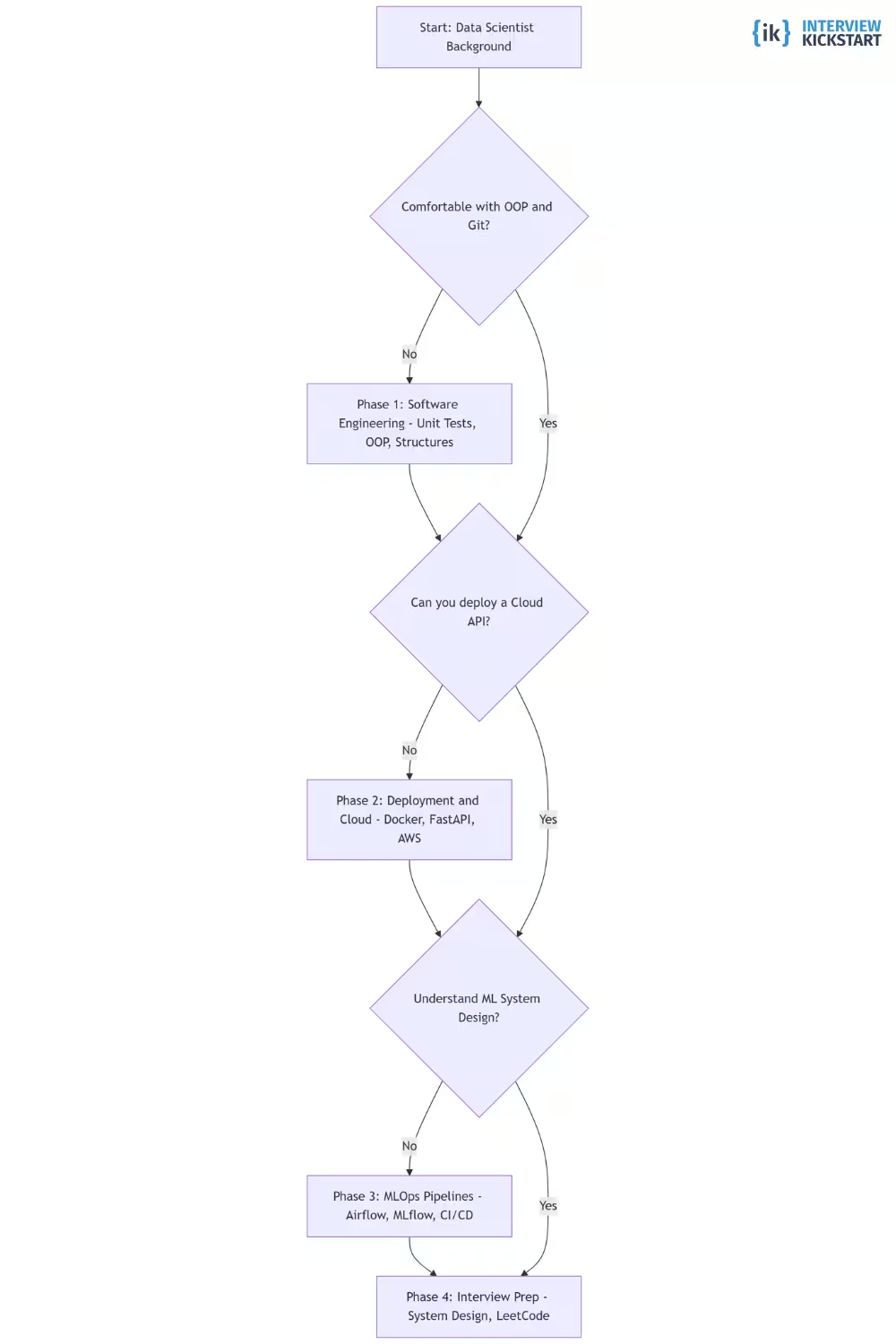

How to Prioritize What to Learn

Start with your Data Scientist background and ask yourself:

Are you comfortable writing modular, testable Python outside of notebooks?

- If No → Phase 1: Software Engineering Foundations

- If Yes → Move to next question

Can you deploy a model as an API or batch service in the cloud?

- If No → Phase 2: Deployment & Containerization

- If Yes → Move to next question

Do you understand ML system design beyond just model selection?

- If No → Phase 3: Production Pipelines & MLOps

- If Yes → Phase 4: ML System Design & Interview Prep

Phase 1: Software Engineering Foundations (3–4 Weeks)

This phase is about shifting from notebook-style experimentation to production-quality code. The goal is not to become a backend engineer. The goal is to become comfortable writing modular, testable, maintainable Python that can live inside a shared codebase.

You should focus on writing clean Python packages instead of long notebooks. Learn how to structure a project with separate modules, configuration files, and requirements. Practice writing unit tests using pytest. Get comfortable with Git workflows such as branching, pull requests, and resolving merge conflicts.

Strengthen core computer science basics such as data structures, complexity intuition, and how memory and data flow through programs. You are building engineering discipline, not new modeling depth. What to avoid at this stage is learning new ML algorithms or diving into advanced distributed systems. You already know enough modeling. The gap is engineering rigor.

Core outcome: You should be able to take one of your old notebook projects and refactor it into a clean Python package with tests and clear structure.

Phase 2: Deployment and Containerization (3–4 Weeks)

This phase moves you from “it runs locally” to “it runs anywhere.” Focus on wrapping a trained model inside a simple API using FastAPI or Flask. Learn how to containerize the application using Docker so that the environment is isolated and reproducible. Deploy it to a basic cloud service such as AWS EC2 or a managed platform.

You should understand how requests flow through the system, how dependencies are managed, and how logs are generated. Learn the difference between batch inference and real-time inference, and when each makes sense. The core concept to learn here is reproducibility and deployment flow. Understand how releases happen and what can break during deployment.

Avoid overcomplicating this phase with Kubernetes or advanced infrastructure. Simplicity with clarity is more important than complexity.

Core outcome: You should have a containerized ML service deployed in the cloud that can receive requests and return predictions reliably.

Phase 3: Production Pipelines and ML Lifecycle (3–4 Weeks)

This phase focuses on ownership beyond deployment. Build automated training and evaluation pipelines. Use orchestration tools like Airflow or GitHub Actions to trigger retraining workflows. Track model versions and experiments using MLflow. Implement monitoring to detect performance degradation and data drift.

You should understand how data flows from ingestion to feature engineering to training to inference. Think in terms of lifecycle rather than isolated scripts. The core concept here is systems thinking. You are designing a repeatable, maintainable process that can evolve over time. Avoid chasing perfection. Your goal is to show that you understand lifecycle ownership, not to build enterprise-grade infrastructure.

Core outcome: You should have an automated pipeline where models can be retrained, versioned, deployed, and monitored.

“Right now, leveraging an AI coding assistant is the present and future, and it’s hard to imagine it won’t come up somewhere in the interview process.”

Phase 4: ML System Design and Interview Readiness (Ongoing)

This phase prepares you for interviews and real ownership. Practice designing end-to-end ML systems. Be able to discuss trade-offs such as batch versus real-time inference, scaling models versus scaling data, monitoring strategies, and rollback plans. Think about failure handling and how systems degrade gracefully.

Interviewers are evaluating pragmatism. Can you balance speed with sophistication? Can you design an MVP that evolves rather than overengineering upfront? Also prepare for recruiter screens and behavioral rounds. Collaboration, growth mindset, and clarity matter. Technical skill alone is not enough.

If time is limited, prioritize deployment, lifecycle ownership, and system design discussions. These contribute most directly to clearing ML Engineering interviews.

Core outcome: You should be able to confidently design, explain, and defend an end-to-end ML system with realistic production constraints.

Folks sometimes get frustrated with initial recruiter screens where they may be speaking with a non-technical recruiter. It is important to remember that the recruiter often has culture fit in mind as much as technical fit, and they are thinking about how collaborative the candidate comes off as, and how much of a growth mindset they seem to possess. Be kind and curious.

4. Projects Professionals Should Build for Machine Learning Engineer

If you are transitioning from data scientist to machine learning engineer, your projects matter more than certificates. Many candidates make a critical mistake here. They showcase strong modeling work in Jupyter notebooks — high accuracy, clean EDA, nice visualizations. That shows that you are a good Data Scientist, not a candidate switching to machine learning engineer. ML Engineering projects must demonstrate end-to-end ownership, production constraints, deployment, monitoring, and iteration.

What to Avoid

Before discussing what to build, let’s be clear about what hurts your profile:

- Static notebook projects with no deployment

- Kaggle competitions with no production context

- Models trained once and never monitored

- Projects with no defined business metric

These prove modeling skills. They do not prove engineering readiness.

Reference Project: Real-Time Drift Monitoring System

If you build only one serious project, build this.

Problem Statement: Deploy a customer churn prediction model that serves real-time predictions and automatically alerts when input data distribution shifts significantly.

Components to Build:

- Prediction Service: Build a FastAPI application that accepts structured input data and returns model predictions. Add input validation, structured logging, and proper error handling. This demonstrates API development, model serving, and production readiness.

- Containerization: Package the entire application using Docker. Ensure dependencies are isolated and the service runs consistently across environments. This demonstrates environment management and reproducibility.

- Traffic Simulation: Create a script that sends thousands of requests to your API to simulate real-world usage. Measure latency and throughput under load. This demonstrates performance awareness and basic load testing.

- Drift Detection: Implement a monitoring component that compares live input data distributions against training data. Use statistical tests or monitoring libraries to detect significant shifts. This demonstrates lifecycle thinking and production monitoring.

- Alerting and Failure Handling: Configure alerts when drift thresholds are crossed or when system failures occur. Simulate failure scenarios and handle them gracefully. This demonstrates reliability engineering and ownership beyond deployment.

Final Output: A deployed, containerized ML service that serves predictions, monitors input data quality, detects drift, and generates alerts when issues arise. This project proves you can build and maintain an end-to-end production ML system rather than just train a model offline.

Alternative Project: Scalable Batch Inference Pipeline

If you prefer working with large datasets and offline systems rather than real-time APIs, this is a strong alternative project. The goal here is not to build a more accurate model. The goal is to prove that you can process data reliably at scale and design systems that do not break under load.

Focus: Process large datasets efficiently and repeatedly in a production-style setup. Instead of serving single predictions via an API, you design a scheduled pipeline that reads large volumes of data, runs inference in batch mode, and writes results to downstream systems such as a database or data warehouse.

What to Build:

- A scheduled workflow using a tool like Airflow or Prefect to orchestrate the pipeline

- A job that reads a large dataset (ideally several gigabytes) and runs inference in chunks

- Proper logging, error handling, and retry logic

- Output written to a database or storage system with clear schema design

- Monitoring signals for job failures, latency spikes, or data quality issues

You should simulate realistic constraints. For example, assume the job runs nightly and must complete within a fixed time window. Think about what happens if the job fails halfway. How do you resume safely without duplicating outputs?

Defining the problem and success metric, developing the evaluation dataset, engineering the training data and pipelines, and deploying.

5. Interview Preparation for Candidates Switching From Data Scientist to Machine Learning Engineer

When transitioning from data scientist to machine learning engineer, the interview can feel unexpectedly challenging. The evaluation shifts. Interviewers are not just testing modeling ability. They are testing production thinking, system design, and engineering ownership.

Many Data Scientists walk in confidently because their experimentation and modeling skills are strong. Yet they struggle because they underprepare for deployment discussions, system trade-offs, and real-world production constraints that ML Engineering interviews emphasize heavily.

How to Prepare for Machine Learning Engineer Interviews

Preparation for ML Engineer interviews looks very different from preparing for Data Scientist roles, even though many candidates underestimate this shift. Most Machine Learning Engineer interviews combine coding, production ML concepts, and system design.

Many Data Scientists assume that strong modeling experience will carry them through. But interviews often go deeper. You are evaluated not just on how well you can build a model, but on how you deploy, monitor, scale, and debug it in production. Many interviews explicitly test whether you can explain why a model’s performance dropped, when to retrain, how to handle drift, and how to balance latency with accuracy.

A Practical Interview Preparation Timeline Typically Looks Like This:

- First 2–3 weeks: Strengthen coding fluency. Practice data structures and algorithms under time pressure. Focus on writing clean, correct code while explaining your reasoning clearly.

- Next 3–4 weeks: Deepen production ML understanding. Practice explaining deployment workflows, monitoring strategies, model evaluation choices, drift handling, and retraining logic in simple language.

- Final phase: Focus heavily on ML system design. Practice designing end-to-end ML systems. Be ready to discuss batch versus real-time inference, scaling constraints, logging, observability, rollback strategies, and ownership trade-offs.

Typical Interview Process and Structure for Machine Learning Engineer

Across companies, the machine learning engineer interview process follows a fairly repeatable structure, even though the number of rounds and emphasis may vary. For candidates transitioning from data scientist to machine learning engineer, interviews evaluate production ownership and system thinking just as heavily as modeling depth.

Most processes include a recruiter screen (background, motivation for transition, role alignment), a technical screen (algorithmic coding and baseline ML engineering readiness), and an interview loop with multiple 45–60 minute rounds evaluating different dimensions of ML engineering capability.

| Stage | What This Stage Evaluates | What Candidates Are Usually Tested On |

|---|---|---|

| Recruiter Screen | Role fit, motivation, transition clarity | Why move from Data Scientist to MLE, production exposure, collaboration style, growth mindset |

| Technical Screen | Baseline engineering and ML readiness | LeetCode-style coding, Python fluency, core ML reasoning beyond just model fitting |

| Interview Loop (Virtual or Onsite) | End-to-end ML engineering capability | Coding, ML system design, production ML concepts, project deep dives, behavioral ownership |

Common Rounds in the Interview Loop Include: coding interviews focused on data structures and algorithms, ML system design interviews covering end-to-end ML pipelines, ML fundamentals or breadth rounds testing model reasoning and evaluation depth, production ML discussions centered on monitoring, retraining, and failure handling, project deep dives probing ownership and deployment experience, and behavioral interviews evaluating collaboration and decision-making.

| Round Type | Primary Focus | What Interviewers Look For |

|---|---|---|

| Coding (DSA) | Problem solving under time pressure | Correctness, efficiency, edge case handling, communication clarity |

| ML System Design | Designing production ML systems | Clear data → features → model → serving flow, scalability, latency trade-offs, failure handling |

| ML Fundamentals | Model understanding and reasoning | Bias-variance tradeoff, metric selection, feature trade-offs, drift analysis |

| Production ML | Operating ML systems over time | Monitoring, retraining triggers, rollback vs replacement decisions |

| Project Deep Dive | Depth of ownership | Ability to explain architecture, trade-offs, deployment challenges, and production failures |

| Behavioral | Collaboration and judgment | Working in engineering teams, conflict handling, prioritization, learning mindset |

6. Interview Questions for Machine Learning Engineer

Before getting into specific questions, it helps to understand how ML Engineer interviews are structured at a deeper level. If you are transitioning from data scientist to machine learning engineer, you might expect interviews to focus heavily on modeling techniques. In reality, they are designed to probe judgment, engineering maturity, and your ability to own systems beyond experimentation. Most companies are not trying to test trivia. They are testing whether you can reason about trade-offs, handle ambiguity, and operate ML systems responsibly in production.

1. Coding and Data Structures

This round evaluates raw problem-solving ability, engineering discipline, and clarity under time pressure. Even for ML roles, many companies begin with medium to hard algorithmic coding. The goal is not competitive programming brilliance. The goal is structured thinking and correctness.

- Implement an LRU cache.

- Given a stream of numbers, return the top K frequent elements.

- Design a rate limiter.

- Write code to efficiently merge large sorted datasets.

- Optimize a slow piece of Python code for memory and runtime.

2. ML Fundamentals (Model Understanding and Reasoning)

This domain evaluates whether you truly understand how models behave, not just how to train them in a notebook. Interviews push deeper — you are expected to connect theory to production impact. It is not enough to define bias-variance tradeoff. You must explain what happens when variance increases in a live system.

- Explain the bias-variance tradeoff in the context of a production recommendation system.

- How would you detect overfitting in production when labels are delayed?

- What happens if your training data distribution differs from inference data?

- How do L1 and L2 regularization affect model behavior differently?

- How would you diagnose a sudden drop in model accuracy?

- What are calibration techniques and when do they matter?

- How would you evaluate a ranking model differently from a classification model?

3. Production ML (Lifecycle, Monitoring, and Operations)

This is often the biggest gap when moving from data scientist to machine learning engineer. This domain evaluates whether you treat models as long-lived, versioned, production assets. In Data Science roles, you may train models and hand them off. In ML Engineering, you own the full lifecycle.

- How do you decide when a model is ready for production?

- What artifacts must be stored with a trained model?

- How do you compare a new model to an existing production model?

- What happens if the new model performs better offline but worse online?

- How do you implement canary deployments or shadow testing?

- How do you detect data drift? What types of drift exist?

4. ML System Design

This domain evaluates whether you can design an end-to-end machine learning system, not just train a model. You are expected to think in terms of data flow, latency, scalability, monitoring, and failure handling. It is not enough to say which algorithm you would use. You must explain how data moves from ingestion to features to training to serving, and what happens when something breaks.

- Design a real-time fraud detection system.

- When would you choose batch inference over real-time inference?

- How would you design a feature store for multiple ML models?

- How would you scale training for very large datasets?

- How do you handle cold start problems in recommendation systems?

- How would you ensure low latency under high traffic?

- What trade-offs would you make between model complexity and system reliability?

All of them on occasion, but system design often reveals whether a candidate has been working at scale.

5. Project Deep Dive

This domain evaluates depth of ownership and real-world experience. It is less about textbook knowledge and more about what you have actually built. Interviewers will often choose one of your projects and go deep. If you are transitioning from data scientist to ML engineer, this is where your production exposure becomes visible.

- Walk me through your most production-ready ML project.

- How did you design the training and inference pipelines?

- What trade-offs did you make and why?

- How did you monitor model performance over time?

- If you were redesigning this project today, what would you change?

6. Behavioral (Collaboration and Judgment)

This domain evaluates how you operate within an engineering organization. Machine Learning Engineers rarely work alone. They collaborate with backend engineers, product managers, and infrastructure teams. Interviewers are assessing judgment, prioritization, and communication under ambiguity.

- Tell me about a time you disagreed with a teammate.

- Describe a model that failed and what you learned.

- How do you balance shipping quickly versus improving model performance?

- How do you handle ambiguous or changing requirements?

- Describe a situation where you had to prioritize between technical debt and feature delivery.

7. Common Mistakes When Switching from Data Scientist to Machine Learning Engineer

Even technically strong candidates make predictable mistakes when transitioning from data scientist to machine learning engineer. Most of these are not about intelligence or lack of skill. They are about mindset, framing, and interview behavior.

Mistake 1: Focusing on Correct Answers Instead of Ownership

Many Data Scientists enter ML Engineering interviews thinking the goal is to give technically perfect answers. They explain modeling decisions clearly, define metrics precisely, and walk through training pipelines in detail. On the surface, this looks strong.

But ML Engineering interviews are not only evaluating correctness. They are evaluating ownership. If your answers consistently stop at model training and offline validation, interviewers begin to question whether you have truly owned systems in production. They want to hear about monitoring, retraining decisions, deployment trade-offs, and failure handling. The shift from data scientist to machine learning engineer requires expanding your frame from “does the model work?” to “does the system survive?”

Mistake 2: Overengineering in ML System Design

In ML system design interviews, some candidates respond with highly complex architectures from the very beginning. They introduce distributed training clusters, multiple streaming pipelines, and layered fallback systems before even validating the core problem.

What interviewers are truly evaluating goes beyond architecture diagrams. They are trying to understand whether you are pragmatic. Can you design something simple that works? Can you clearly justify trade-offs between speed, cost, and sophistication? Strong candidates typically start with a clear MVP and then describe how it would scale. Weaker candidates jump to enterprise-level complexity without grounding their decisions in constraints.

Mistake 3: Underestimating Collaboration Signals

One question that often sits quietly in the interviewer’s mind is whether they would actually enjoy working with you. Coming across as defensive, dismissive, overly rigid, or negative about past teammates can overshadow strong technical answers. ML Engineers operate within cross-functional squads that include backend engineers, product managers, and infrastructure teams. Interviewers are evaluating whether you will contribute positively to that environment.

Mistake 4: Avoiding Discussion of Failure

When asked about production issues or performance drops, some candidates try to downplay problems or avoid specifics. That instinct is understandable, but it often backfires. Strong candidates speak openly about what broke, how they diagnosed it, and what they changed afterward. They treat failure as a natural part of operating real systems. This demonstrates accountability and growth. Avoiding failure stories can signal lack of exposure. Owning them signals experience.

Mistake 5: Confusing Tool Usage with Systems Thinking

It is common to hear candidates list tools such as Docker, MLflow, or Airflow as proof of readiness. Tools are useful, but they are not the point. Interviewers care less about whether you have used a specific framework and more about whether you can explain why you used it, what trade-offs it introduced, and how it fit into the larger system. If you cannot explain how you ensured reproducibility, handled rollback, or monitored degradation, tool names do not carry much weight.

DevOps Engineer to MLOps Engineer Transition Guide

How to Switch from Data Engineer to ML Engineer?

How to Transition from Software Engineer to Machine Learning Engineer

How to Transition from Software Engineer to MLOps Engineer

Transition from Business Analyst to Data Scientist

Conclusion

The transition from data scientist to machine learning engineer is not a shortcut, and it is not just a title upgrade. The real shift lies in moving from building and evaluating models to owning systems that evolve, degrade, and require continuous judgment in production. Success in this transition depends less on knowing more algorithms and more on understanding lifecycle management, system reliability, and engineering trade-offs.

This path is best suited for Data Scientists who enjoy system ownership, ambiguity, and long-term responsibility. It fits those who want to move closer to production systems and customer-facing impact. It is not ideal for someone who wants to stay purely in experimentation mode or avoid deeper engineering rigor.

The transition from data scientist to machine learning engineer can unlock broader ownership, stronger compensation ceilings, and long-term technical growth. But it only works when pursued with clear expectations and disciplined skill development.

For many Data Scientists, the real challenge is expanding ownership beyond experimentation into production systems. Moving into ML Engineering means taking responsibility for how models are trained, versioned, deployed, monitored, and retrained over time. It requires thinking beyond model accuracy and toward system stability and long-term performance.

Interview Kickstart’s Advanced Machine Learning Program with Agentic AI is designed for professionals who already understand modeling fundamentals and want to build credible, production-grade ML system ownership. The program emphasizes real ML pipelines, deployment, monitoring, retraining strategies, and interview preparation aligned with how Machine Learning Engineers are actually evaluated.

If you are looking for a structured, end-to-end path to move from Data Science into ML Engineering without guessing what to learn next, start with the free webinar to understand how the program supports this transition.